If the algorithms are written in Python, why not save the result as a pickle object (or any other format your Python model supports)?
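As a rough sketch of the pickle route, assuming the trained model is any picklable Python object: `ThresholdModel`, `save_model` and `load_model` are hypothetical names standing in for your own model and helpers, not part of KNIME or any library. The only real API used is the standard `pickle` module.

```python
import pickle
import tempfile
from dataclasses import dataclass
from pathlib import Path


@dataclass
class ThresholdModel:
    """Hypothetical stand-in for a trained Python model (e.g. a fitted estimator)."""
    threshold: float

    def predict(self, values):
        # Label each value 1 if it exceeds the learned threshold, else 0
        return [1 if v > self.threshold else 0 for v in values]


def save_model(model, path):
    # Serialize the model object to disk in binary pickle format
    with open(path, "wb") as f:
        pickle.dump(model, f)


def load_model(path):
    # Only unpickle files from trusted sources: unpickling can execute arbitrary code
    with open(path, "rb") as f:
        return pickle.load(f)


if __name__ == "__main__":
    model = ThresholdModel(threshold=0.5)
    path = Path(tempfile.gettempdir()) / "model.pkl"
    save_model(model, path)
    restored = load_model(path)
    print(restored.predict([0.2, 0.7, 0.5]))  # [0, 1, 0]
```

Keep in mind that the code that loads the pickle needs the same class definitions and compatible package versions as the code that wrote it.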

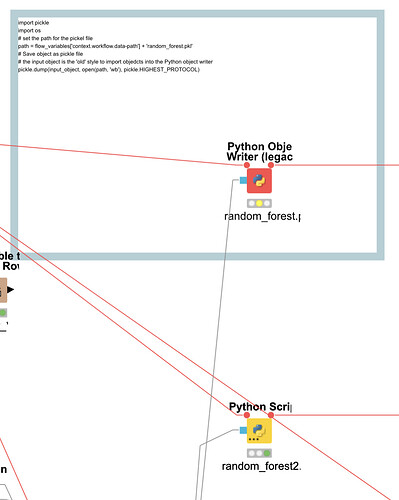

To keep it in KNIME ‘style’, you could use a dedicated node.

Maybe you could provide a sample workflow or a screenshot of your task so we can better understand the challenge: which part will be in KNIME and which part in Python? If you use KNIME Server, you will have to make sure the Python environment on the server has the same packages and setup as the desktop version.
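One way to check that the server matches the desktop is to snapshot the installed packages on both machines and diff them. A minimal sketch using the standard `importlib.metadata` module; the script name, the `dump`/`compare` sub-commands and the JSON file name are hypothetical choices for illustration, not a KNIME feature.

```python
import json
import sys
from importlib.metadata import distributions


def installed_packages():
    """Map each installed distribution name (lower-cased) to its version."""
    packages = {}
    for dist in distributions():
        name = dist.metadata["Name"]
        if name:  # skip broken installs that lack a name
            packages[name.lower()] = dist.version
    return packages


def diff_environments(reference, current):
    """Return packages missing from `current` and packages whose versions differ."""
    missing = sorted(set(reference) - set(current))
    mismatched = {
        name: (reference[name], current[name])
        for name in sorted(set(reference) & set(current))
        if reference[name] != current[name]
    }
    return missing, mismatched


if __name__ == "__main__":
    # Hypothetical usage:
    #   desktop: python check_env.py dump desktop_env.json
    #   server:  python check_env.py compare desktop_env.json
    if len(sys.argv) == 3 and sys.argv[1] == "dump":
        with open(sys.argv[2], "w") as f:
            json.dump(installed_packages(), f, indent=2, sort_keys=True)
    elif len(sys.argv) == 3 and sys.argv[1] == "compare":
        with open(sys.argv[2]) as f:
            reference = json.load(f)
        missing, mismatched = diff_environments(reference, installed_packages())
        for name in missing:
            print(f"missing: {name}")
        for name, (want, have) in mismatched.items():
            print(f"version differs: {name} desktop={want} server={have}")
```

Comparing the output of `pip freeze` (or `conda env export` for conda environments) from both machines achieves much the same without a custom script.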

If you want to use PMML, that is also an option, but you will be limited to models that support this format.

Just as a remark: KNIME can also work with Jupyter notebooks directly.