Hi @gab1one …

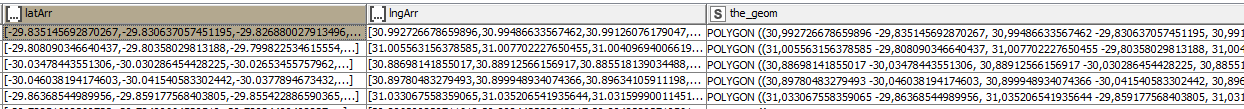

I found the problem… I have a Java snippet in the KNIME flow that takes the values from a Latitude array and a Longitude array and creates a WKT polygon.

The values in the arrays are doubles with “.” as the decimal separator. For some reason, in 4.4.1, when the Java Snippet node creates the WKT polygon (which is a string), the “.” in each double is changed to a “,”, which is incorrect.
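The snippet code itself isn't shown here, so the following is only a hypothetical sketch of this kind of snippet. It assumes the doubles are formatted with `String.format`, which (without an explicit `Locale` argument) uses the JVM's default format locale; `Double.toString`, by contrast, always uses “.”. `toWktPolygon` and the sample coordinates are made up for illustration. The sketch passes the locale explicitly so the difference between `Locale.ROOT` (always “.”) and a locale such as `Locale.GERMANY` (“,”) is visible. It also assumes WKT's "x y" order (longitude then latitude) and that the arrays do not already repeat the first point.

```java
import java.util.Locale;

public class WktPolygonSketch {

    // Hypothetical helper: builds a WKT POLYGON from parallel latitude/longitude arrays.
    // WKT lists each vertex as "x y", i.e. "longitude latitude".
    static String toWktPolygon(double[] lat, double[] lon, Locale locale) {
        if (lat.length != lon.length || lat.length < 3) {
            throw new IllegalArgumentException("need at least 3 matching lat/lon values");
        }
        StringBuilder sb = new StringBuilder("POLYGON((");
        for (int i = 0; i < lat.length; i++) {
            // The locale argument decides the decimal separator ("." or ",").
            sb.append(String.format(locale, "%.6f %.6f", lon[i], lat[i])).append(", ");
        }
        // A WKT ring must be closed, so repeat the first vertex at the end.
        sb.append(String.format(locale, "%.6f %.6f", lon[0], lat[0]));
        return sb.append("))").toString();
    }

    public static void main(String[] args) {
        double[] lat = {52.5, 52.6, 52.4};
        double[] lon = {13.3, 13.4, 13.5};
        // Locale.ROOT always formats with "." regardless of the machine's settings.
        System.out.println(toWktPolygon(lat, lon, Locale.ROOT));
        // Locale.GERMANY formats with ",", which produces invalid WKT.
        System.out.println(toWktPolygon(lat, lon, Locale.GERMANY));
    }
}
```

If the real snippet does use a locale-sensitive call such as `String.format(...)` or a `DecimalFormat` without a locale, passing `Locale.ROOT` (or building the string with `Double.toString`) would make the output independent of whichever default locale the KNIME version's JVM picks up.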

I don’t know why it does this in KNIME 4.4.1; it does not happen in KNIME 4.3.4, and I have not changed any of the code in the snippet.

Any suggestions why this might be happening?

tC/.