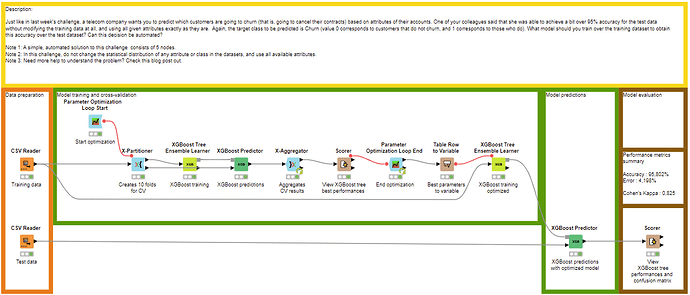

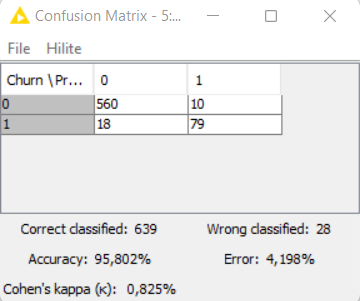

With a proper training-validation-test workflow (still without data transformation, SMOTE, or anything else), still using XGBoost, tuning the same two hyperparameters, and with (hopefully) a fixed random seed on every node, I get an accuracy of 95.802%, exactly what you had previously.

Here is the link to the workflow: justknimeit-23part2_optimization_Victor – KNIME Hub

It is definitely out of scope for this challenge given the number of nodes, but model optimization is still interesting to look at.

I prefer this optimization version to the simple one that fine-tunes on the test set (@Rubendg), because the final accuracy obtained the simple way is not a fair assessment. The test set is supposed to be independent from the training and validation of a model so that it can measure performance on unseen data. If it is also used to fine-tune the model, the assessment is no longer reliable, independent, or generalizable.

With cross-validation on the training set, the test set remains independent and is only used at the end for model assessment, so the accuracy metric is reliable.
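
For anyone who wants to see the idea outside KNIME, here is a minimal Python sketch of the same pattern: tune on the training set with cross-validation, then score the test set exactly once. It is not the workflow itself. The synthetic data, the tiny k-nearest-neighbours classifier (a stand-in for XGBoost so the example needs no extra libraries), the candidate values, and every function name are assumptions made for illustration.

```python
import random
from collections import Counter

def make_data(n, rng):
    # Hypothetical synthetic two-class data, only for illustration.
    rows = []
    for _ in range(n):
        x1, x2 = rng.random(), rng.random()
        label = int(x1 + x2 + rng.gauss(0, 0.15) > 1.0)
        rows.append(((x1, x2), label))
    return rows

def knn_predict(fit_rows, point, k):
    # Stand-in model (NOT XGBoost): majority vote among the k nearest rows.
    def squared_distance(row):
        return (row[0][0] - point[0]) ** 2 + (row[0][1] - point[1]) ** 2
    nearest = sorted(fit_rows, key=squared_distance)[:k]
    return Counter(label for _, label in nearest).most_common(1)[0][0]

def accuracy(fit_rows, eval_rows, k):
    hits = sum(knn_predict(fit_rows, x, k) == y for x, y in eval_rows)
    return hits / len(eval_rows)

def cross_val_accuracy(train_rows, k, n_folds=5):
    # Each fold is held out once; only training rows are ever used here.
    folds = [train_rows[i::n_folds] for i in range(n_folds)]
    scores = []
    for i, held_out in enumerate(folds):
        fit_rows = [row for j, fold in enumerate(folds) if j != i for row in fold]
        scores.append(accuracy(fit_rows, held_out, k))
    return sum(scores) / n_folds

rng = random.Random(42)            # fixed seed so the run is reproducible
data = make_data(300, rng)
train, test = data[:240], data[240:]

# Hyperparameter search sees the training set only.
candidates = [1, 3, 5, 9, 15]      # odd values avoid tied votes
cv_scores = {k: cross_val_accuracy(train, k) for k in candidates}
best_k = max(cv_scores, key=cv_scores.get)

# The test set is touched once, after the hyperparameter is chosen.
test_accuracy = accuracy(train, test, best_k)
print(f"best k = {best_k}, CV accuracy = {cv_scores[best_k]:.3f}, "
      f"test accuracy = {test_accuracy:.3f}")
```

The point of the design is that `test` never appears inside `cross_val_accuracy`, so the final number cannot be inflated by the search over `candidates`.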

Still, you can remove all the model optimization nodes and keep only the 5 main nodes needed (with the optimized hyperparameters properly configured in the XGBoost training node) to enter the challenge with a high accuracy.