Hi Team,

I am trying to train a Time Series Model in KNIME AP, with the aim of deploying it to KNIME HUB in the near future.

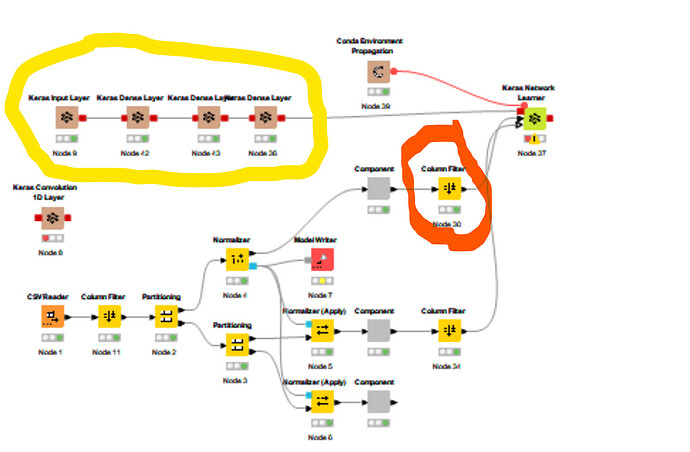

This is what the flow looks like:

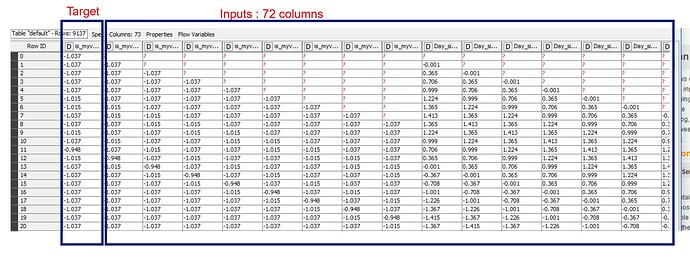

This is what my data looks like at the output table marked with the red circle:

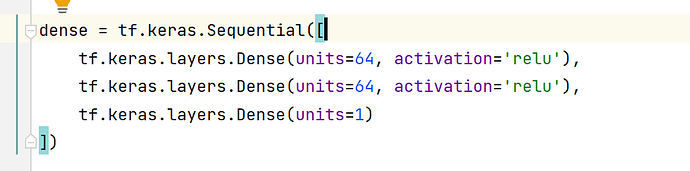

Before using KNIME, I had prepared the model in PyCharm on my local laptop, and I decided to try this dense Keras model in KNIME AP first as a test:

Pic 1 shows my current workflow, with the Keras Dense Layer nodes in yellow. I have ordered them according to what I learned from the community.

I need help with the following:

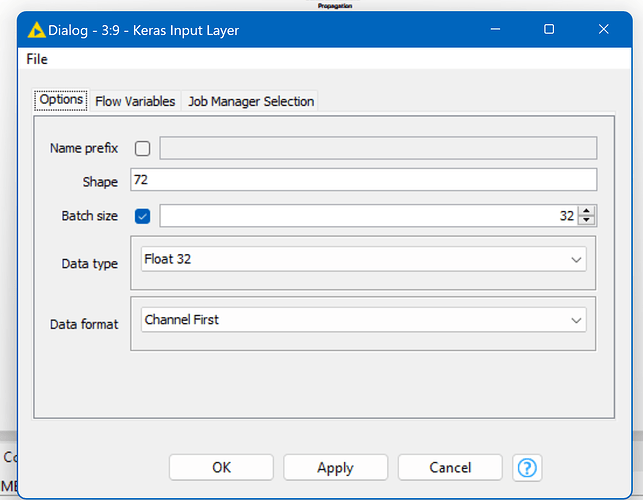

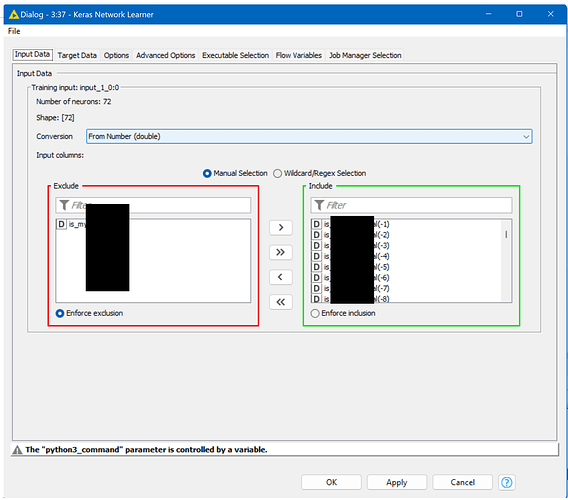

1. How to configure the Keras Input Layer:

Current configuration:

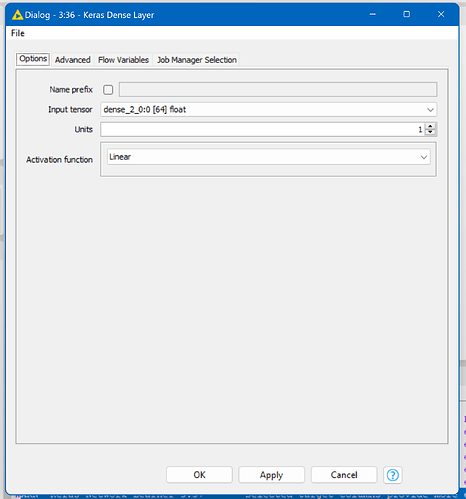

2. How to configure the rest of the layers as per the requirement. Below is the last layer's configuration:

This is the error I am currently getting:

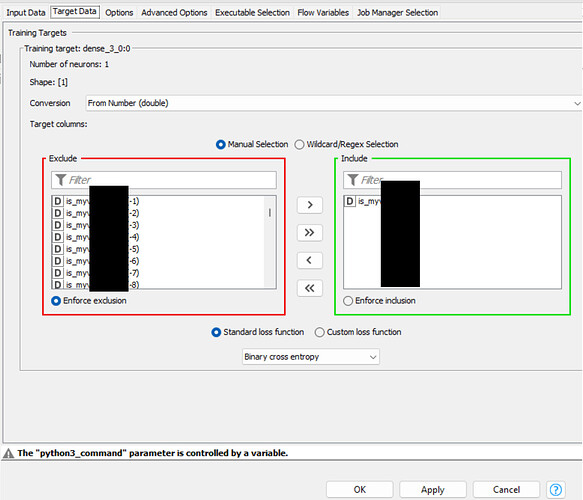

WARN Keras Network Learner 3:37 Selected target columns provide more elements (73) than neurons available (1) for network target ‘dense_3_0:0’. Try removing some columns from the selection.

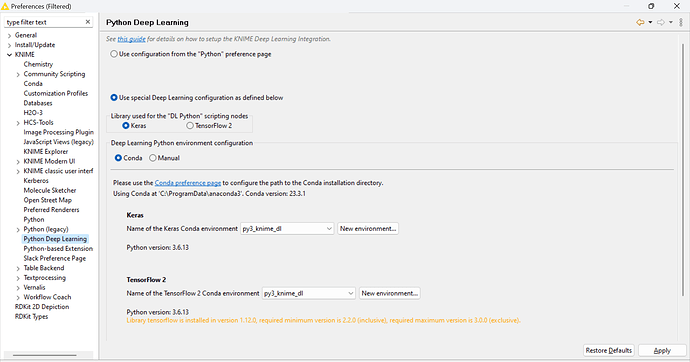
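To make the learner warning above concrete, here is a minimal plain-Python sketch of how I read it. This is only my assumption about the check, not KNIME's actual code: the function name `describe_mismatch` is hypothetical, and only the numbers 73 and 1 come from my error message.

```python
def describe_mismatch(n_target_elements: int, n_output_units: int) -> str:
    """Return 'ok', or a message resembling the learner's warning.

    Assumption: the learner compares the number of values supplied by the
    selected target columns with the number of neurons in the last layer.
    """
    if n_target_elements > n_output_units:
        return (
            f"target columns provide more elements ({n_target_elements}) "
            f"than neurons available ({n_output_units})"
        )
    return "ok"


# Numbers from my error message: 73 target elements, 1 output neuron.
print(describe_mismatch(73, 1))

# Two hypothetical ways to make the numbers agree:
print(describe_mismatch(1, 1))    # select a single target column
print(describe_mismatch(73, 73))  # or give the last Dense layer 73 units
```

If this reading is right, I either need to select fewer target columns in the learner or change the number of units in the last Dense layer, but I am not sure which one fits a time series setup.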

3. An answer to this question: once I have developed the model using this technique, I will use the trained model in a different workflow (local/server) for inference (let's call this the inference workflow). Will the inference workflow need Python/conda to work? Training required me to install Python and some dependencies, and I am pretty sure my org will not have those on the HUB. So please let me know whether conda is needed for inference.