Hello KNIMErs,

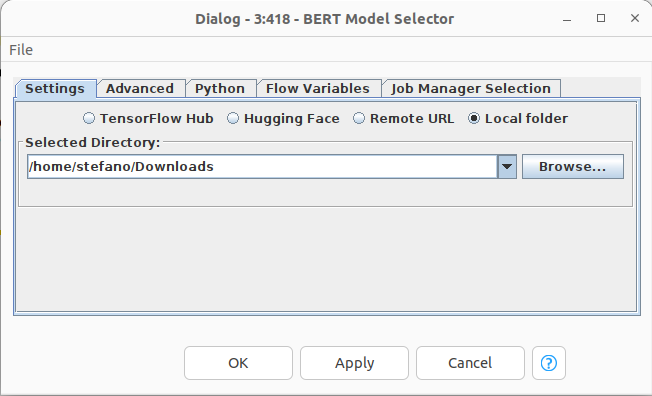

I can run this workflow successfully when bert_en_cased_L-12_H-768_A-12 is selected in the BERT Model Selector node.

The default model, bert_en_wwm_cased_L-24_H-1024_A-16, triggers the following exception (with both the TensorFlow Hub and Remote URL options):

ERROR BERT Model Selector 4:418 Execute failed: Executing the Python script failed: Traceback (most recent call last):

File “”, line 2, in

File “path_to_KNIME/plugins/se.redfield.bert_1.0.2.202212230230/py/BertModelType.py”, line 42, in load_bert_layer

return model_type.load_bert_layer(bert_model_handle, cache_dir)

File “path_to_KNIME/plugins/se.redfield.bert_1.0.2.202212230230/py/BertModelType.py”, line 30, in load_bert_layer

return hub.KerasLayer(bert_model_handle, trainable=True)

File “path_to_KNIME/plugins/se.redfield.bert.channel.bin.linux.x86_64_1.0.2.202212230230/env/lib/python3.9/site-packages/tensorflow_hub/keras_layer.py”, line 153, in __init__

self._func = load_module(handle, tags, self._load_options)

File “path_to_KNIME/plugins/se.redfield.bert.channel.bin.linux.x86_64_1.0.2.202212230230/env/lib/python3.9/site-packages/tensorflow_hub/keras_layer.py”, line 449, in load_module

return module_v2.load(handle, tags=tags, options=set_load_options)

File “path_to_KNIME/plugins/se.redfield.bert.channel.bin.linux.x86_64_1.0.2.202212230230/env/lib/python3.9/site-packages/tensorflow_hub/module_v2.py”, line 92, in load

module_path = resolve(handle)

File “path_to_KNIME/plugins/se.redfield.bert.channel.bin.linux.x86_64_1.0.2.202212230230/env/lib/python3.9/site-packages/tensorflow_hub/module_v2.py”, line 47, in resolve

return registry.resolver(handle)

File “path_to_KNIME/plugins/se.redfield.bert.channel.bin.linux.x86_64_1.0.2.202212230230/env/lib/python3.9/site-packages/tensorflow_hub/registry.py”, line 51, in __call__

return impl(*args, **kwargs)

File “path_to_KNIME/plugins/se.redfield.bert.channel.bin.linux.x86_64_1.0.2.202212230230/env/lib/python3.9/site-packages/tensorflow_hub/compressed_module_resolver.py”, line 67, in __call__

return resolver.atomic_download(handle, download, module_dir,

File “path_to_KNIME/plugins/se.redfield.bert.channel.bin.linux.x86_64_1.0.2.202212230230/env/lib/python3.9/site-packages/tensorflow_hub/resolver.py”, line 418, in atomic_download

download_fn(handle, tmp_dir)

File “path_to_KNIME/plugins/se.redfield.bert.channel.bin.linux.x86_64_1.0.2.202212230230/env/lib/python3.9/site-packages/tensorflow_hub/compressed_module_resolver.py”, line 63, in download

response = self._call_urlopen(request)

File “path_to_KNIME/plugins/se.redfield.bert.channel.bin.linux.x86_64_1.0.2.202212230230/env/lib/python3.9/site-packages/tensorflow_hub/resolver.py”, line 522, in _call_urlopen

return urllib.request.urlopen(request)

File “path_to_KNIME/plugins/se.redfield.bert.channel.bin.linux.x86_64_1.0.2.202212230230/env/lib/python3.9/urllib/request.py”, line 214, in urlopen

return opener.open(url, data, timeout)

File “path_to_KNIME/plugins/se.redfield.bert.channel.bin.linux.x86_64_1.0.2.202212230230/env/lib/python3.9/urllib/request.py”, line 517, in open

response = self._open(req, data)

File “path_to_KNIME/plugins/se.redfield.bert.channel.bin.linux.x86_64_1.0.2.202212230230/env/lib/python3.9/urllib/request.py”, line 534, in _open

result = self._call_chain(self.handle_open, protocol, protocol +

File “path_to_KNIME/plugins/se.redfield.bert.channel.bin.linux.x86_64_1.0.2.202212230230/env/lib/python3.9/urllib/request.py”, line 494, in _call_chain

result = func(*args)

File “path_to_KNIME/plugins/se.redfield.bert.channel.bin.linux.x86_64_1.0.2.202212230230/env/lib/python3.9/urllib/request.py”, line 1389, in https_open

return self.do_open(http.client.HTTPSConnection, req,

File “path_to_KNIME/plugins/se.redfield.bert.channel.bin.linux.x86_64_1.0.2.202212230230/env/lib/python3.9/urllib/request.py”, line 1349, in do_open

raise URLError(err)

urllib.error.URLError: <urlopen error [SSL: CERTIFICATE_VERIFY_FAILED] certificate verify failed: unable to get local issuer certificate (_ssl.c:1129)>
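The last line says that the Python interpreter could not find the issuer of the server's certificate in its local trust store. In case it helps with diagnosis, here is a minimal sketch that reports where a Python interpreter looks for trusted CA certificates. It only uses the standard `ssl` and `os` modules. The function name `describe_ca_locations` is a made-up helper, not part of KNIME or the Redfield extension, and the output is only meaningful if the script is run with the same bundled interpreter that appears in the traceback, which is an assumption on my part.

```python
import os
import ssl


def describe_ca_locations():
    """Collect the CA certificate locations this Python's ssl module uses.

    Hypothetical helper for illustration; not part of any KNIME plugin.
    """
    paths = ssl.get_default_verify_paths()
    report = {
        # Resolved locations (None when the file or directory does not exist).
        "cafile": paths.cafile,
        "capath": paths.capath,
        # Locations compiled into the OpenSSL build used by this interpreter.
        "openssl_cafile": paths.openssl_cafile,
        "openssl_capath": paths.openssl_capath,
        # Values of the environment variables that can override the defaults.
        "cafile_env_value": os.environ.get(paths.openssl_cafile_env),
        "capath_env_value": os.environ.get(paths.openssl_capath_env),
    }
    report["cafile_exists"] = bool(paths.cafile) and os.path.isfile(paths.cafile)
    report["capath_exists"] = bool(paths.capath) and os.path.isdir(paths.capath)
    # Certificates loaded eagerly into a default context. Certificates in a
    # capath directory are loaded lazily, so this count can be 0 even when
    # the trust store is usable.
    report["loaded_ca_count"] = ssl.create_default_context().cert_store_stats()["x509_ca"]
    return report


if __name__ == "__main__":
    for key, value in describe_ca_locations().items():
        print(f"{key}: {value}")
```

If both `cafile_exists` and `capath_exists` come back `False`, that would be consistent with the "unable to get local issuer certificate" message, although a proxy that re-signs HTTPS traffic could produce the same error even with a populated trust store.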

Any suggestions on how to tackle this issue?

Many thanks in advance,

-Stef