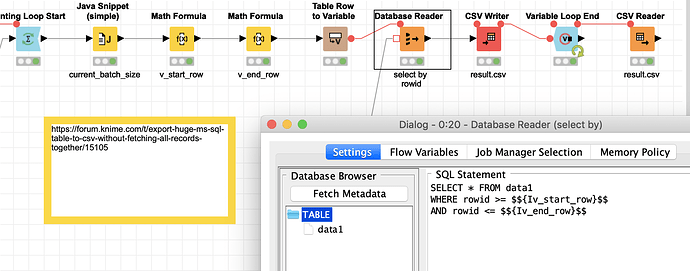

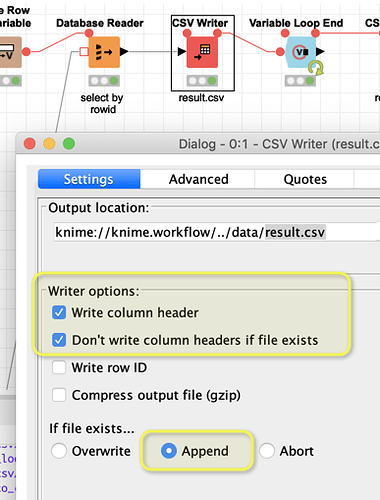

Have you thought about doing it in chunks and then appending each result to a CSV file? The attached example uses ROWIDs from SQLite. You could check whether your table already has a ROWID, or create one yourself. You can choose the size of the chunk/batch yourself. (I use integers; with very large numbers you might need big integers or something similar. Even a workaround using strings might be possible.)
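Outside of KNIME, the same idea can be sketched in a few lines of Python. This is only an illustration of the chunking logic, not what the attached workflow does internally; the function name `export_in_chunks`, its parameters, and the default chunk size are made up for the example, and it assumes a SQLite table that still has its implicit ROWID.

```python
import csv
import sqlite3


def export_in_chunks(db_path, table, csv_path, chunk_size=100_000):
    """Export `table` to `csv_path` in ROWID ranges; return the number of rows written.

    Hypothetical helper for illustration. Assumes a SQLite table with a ROWID
    (i.e. not created WITHOUT ROWID). An existing CSV file is overwritten.
    """
    con = sqlite3.connect(db_path)
    try:
        cur = con.cursor()
        # A table name cannot be bound as a parameter, so quote it as an identifier.
        quoted = '"' + table.replace('"', '""') + '"'
        lo, hi = cur.execute(
            f"SELECT MIN(rowid), MAX(rowid) FROM {quoted}"
        ).fetchone()
        if lo is None:  # empty table, nothing to export
            return 0

        written = 0
        header_done = False
        start = lo
        while start <= hi:
            # Fetch one half-open ROWID range [start, start + chunk_size).
            cur.execute(
                f"SELECT * FROM {quoted} WHERE rowid >= ? AND rowid < ? ORDER BY rowid",
                (start, start + chunk_size),
            )
            rows = cur.fetchall()
            if rows:  # ranges can be empty if rows were deleted
                # First non-empty chunk creates the file, later chunks append.
                mode = "a" if header_done else "w"
                with open(csv_path, mode, newline="", encoding="utf-8") as f:
                    writer = csv.writer(f)
                    if not header_done:
                        writer.writerow([col[0] for col in cur.description])
                        header_done = True
                    writer.writerows(rows)
                written += len(rows)
            start += chunk_size
        return written
    finally:
        con.close()
```

Python integers do not overflow, so the big-integer concern does not apply to this sketch; in KNIME you may still need a Long column for very large ROWIDs. For a database without a ROWID, the same loop should work on any indexed, increasing numeric key.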

The only other idea would be to write the CSV file directly on a big data (Hive) cluster, but that would not work with an MS SQL table.

kn_example_huge_db_to_csv.knar (433.4 KB)