Hi @RIchardC, thank you for the info regarding the size of the input.

I have tested a few things using a PDF file of a book containing around 150k words from cover to cover, which is double the size you’re aiming for.

For the issue you’re solving, KNIME already has a built-in node that caters specifically for it: the Term Neighborhood Extractor Node.

I have used this node quite a few times ever since I started with KNIME. The reason I asked you about the size is that I know this node has performance issues when dealing with cells that contain too many sentences. In our case it’s even worse, since a PDF file of a book parsed by Tika results in, as you noticed yourself, all sentences compiled into one cell.

For the PDF file I mentioned earlier (with 150k words), running the node for 20 minutes produced no results, so I stopped it and decided to find my own workaround.

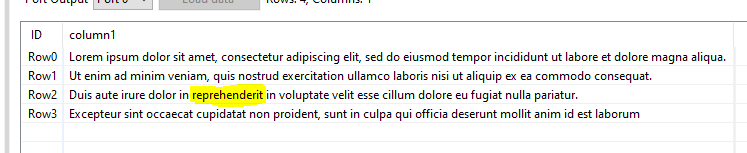

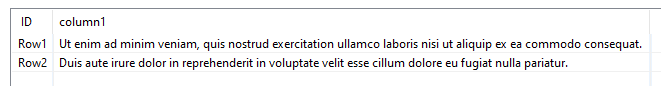

Basically, the workaround starts by converting that one all-inclusive cell into a column where each row holds one word, using a space as the delimiter. This can be done with the Cell Splitter Node, which in my case took only about 1 second. In its configuration window, you’ll have to select the ‘List’ output option instead of ‘new columns’. You’ll end up with one row containing a collection-type cell, which you then pass to the Ungroup Node. If you’re familiar with the Bag of Words Node, the resulting table looks similar. The only difference is that the BoW Node lists only the unique words, while the Cell Splitter lists every word in the order it appears in the book. (Caution: proper data cleaning must be done before all of this, but that is a topic of its own.)
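To make the difference between the two tables concrete, here is a rough sketch in plain Python (not KNIME code). The function name `split_into_words` and the sample text are made up for illustration, and `str.split()` collapses runs of whitespace, which may differ slightly from splitting on a single space in the Cell Splitter.

```python
def split_into_words(text: str) -> list[str]:
    """Return every word of `text` in its original order, duplicates included."""
    # str.split() with no argument splits on any run of whitespace.
    return text.split()


# Hypothetical stand-in for the single cell holding the whole book.
text = "the cat sat on the mat"

# One entry per word, in reading order (what Cell Splitter + Ungroup gives you).
words = split_into_words(text)

# Unique words only, which is closer to what a bag of words looks like.
unique_words = sorted(set(words))

print(words)
print(unique_words)
```

The ordered list keeps both occurrences of "the", while the unique list keeps only one, so only the ordered list preserves the positions needed to look at neighboring words.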

From this list, you can start thinking of how to proceed. I have looked at your past threads in the KNIME forum, and I think you’ve had enough experience on how to proceed from here. The complete solution is doable, but unfortunately I won’t be available for the next 2 weeks. The other day when I wrote a reply to this thread, I had some free time.

In summary, you should now have a column of words ordered in sequence as they originally appeared in the book. That is all you need as a starter to create a workaround from.
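As a possible starting point, this is a minimal sketch of how neighboring words could be pulled out of such an ordered list. It is plain Python rather than a KNIME workflow, it is not how the Term Neighborhood Extractor Node is implemented, and the function name `term_neighborhoods` and the `window` parameter are assumptions made up for this example.

```python
def term_neighborhoods(words, term, window=2):
    """Collect up to `window` words to the left and right of each match of `term`."""
    matches = []
    for i, word in enumerate(words):
        # Case-insensitive comparison; adjust if exact matching is needed.
        if word.lower() == term.lower():
            left = words[max(0, i - window):i]
            right = words[i + 1:i + 1 + window]
            matches.append((left, word, right))
    return matches


# Hypothetical ordered word list, standing in for the ungrouped column.
words = "the cat sat on the mat".split()

neighborhoods = term_neighborhoods(words, "the", window=2)
for left, word, right in neighborhoods:
    print(left, word, right)
```

Because this is a single pass over the list, it scales linearly with the number of words. In a workflow, a similar effect might be achieved with row-based nodes instead of a script.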

I wish you all the best. If you still haven’t found a solution after 2 weeks from today, let me know by tagging my name here.