Thanks for the reply, mlauber71.

Currently, 32 GB is allocated to KNIME on my machine. I can allocate more, since the machine has 64 GB.

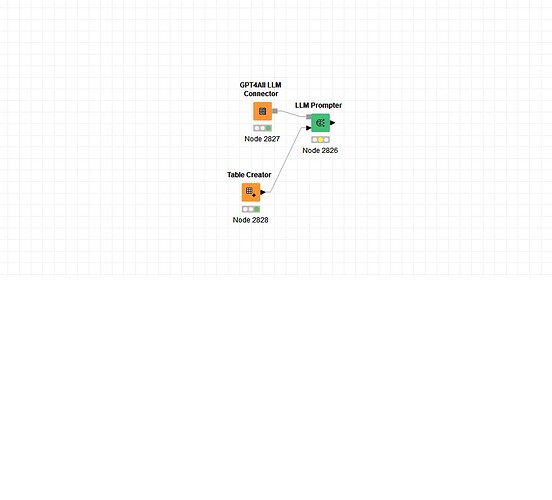

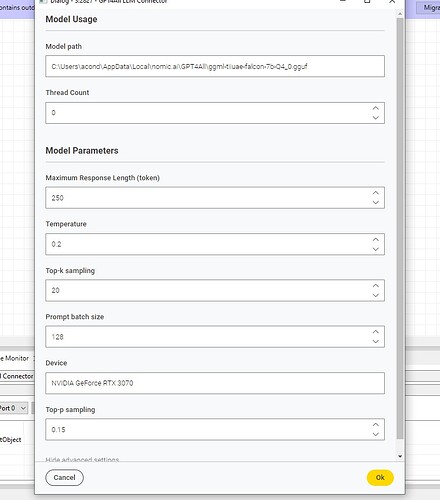

I have installed GPT4All on my machine, and the same models I'm trying to load in KNIME load and run just fine there. That doesn't help me much, though, since I need them to run in KNIME. The models I've been working with so far are falcon-7b-Q4 and falcon-newbpe-q4. Perhaps it is a memory issue; I'm wondering what machine specs other KNIME users have where this works.
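One way to narrow this down would be to load the model file directly with the `gpt4all` Python package, outside of any KNIME node. Below is a minimal sketch, assuming the script is run with the Python interpreter from the bundled `org_knime_python_llm` environment (the one that appears in the traceback). The script name `check_model.py` and the helper names `split_model_path` and `try_load` are made up for illustration; the model path is passed on the command line.

```python
import sys
from pathlib import Path


def split_model_path(model_file):
    """Split a full path to a model file into (directory, file name)."""
    p = Path(model_file)
    return str(p.parent), p.name


def try_load(model_file):
    """Try to load a local model file with the gpt4all package.

    Returns None on success, or the error text on failure.
    """
    # Imported lazily so the helper above works without gpt4all installed.
    from gpt4all import GPT4All

    model_dir, model_name = split_model_path(model_file)
    try:
        # allow_download=False makes gpt4all use only the local file.
        GPT4All(model_name=model_name, model_path=model_dir, allow_download=False)
    except Exception as exc:  # an access violation would surface as OSError
        return f"{type(exc).__name__}: {exc}"
    return None


# Hypothetical usage: python check_model.py <full path to the model file>
if __name__ == "__main__" and len(sys.argv) > 1:
    print(try_load(sys.argv[1]) or "model loaded")
```

If this fails with the same access violation, the problem is more likely in the bundled `gpt4all` version or the model file format than in the KNIME memory settings.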

I have all of the latest AI extensions installed, so I don't think that's the problem.

I've looked through all of the available documentation on this but can't find anything relevant.

Any thoughts?

See the log output below:

DEBUG NodeContainer Setting dirty flag on LLM Prompter 3:2826

DEBUG NodeContainer LLM Prompter 3:2826 has new state: CONFIGURED_MARKEDFOREXEC

DEBUG NodeContainer LLM Prompter 3:2826 has new state: CONFIGURED_QUEUED

DEBUG NodeContainer KOL_Map_V0.3_Mas_BETA 3 has new state: EXECUTING

DEBUG LLM Prompter 3:2826 LLM Prompter 3:2826 doBeforePreExecution

DEBUG LLM Prompter 3:2826 LLM Prompter 3:2826 has new state: PREEXECUTE

DEBUG LLM Prompter 3:2826 Adding handler beb8bba1-4ceb-42b2-aba2-4b86b2a4b60c (LLM Prompter 3:2826: ) - 24 in total

DEBUG LLM Prompter 3:2826 LLM Prompter 3:2826 doBeforeExecution

DEBUG LLM Prompter 3:2826 LLM Prompter 3:2826 has new state: EXECUTING

DEBUG LLM Prompter 3:2826 LLM Prompter 3:2826 Start execute

DEBUG LLM Prompter 3:2826 Assigning temp directory to file store “dd6e59a3-d5c5-4c28-870f-8e171019b497 (GPT4All LLM Connector: C:\Users\acond\AppData\Local\Temp\knime_KOL_Map_V0_3_Ma_13684\fs-GPT4All_LLM_Connector-69518)”

DEBUG LLM Prompter 3:2826 Restoring file store directory “dd6e59a3-d5c5-4c28-870f-8e171019b497 (GPT4All LLM Connector: C:\Users\acond\AppData\Local\Temp\knime_KOL_Map_V0_3_Ma_13684\fs-GPT4All_LLM_Connector-69518)” from “E:\Knime Workspaces\KOL_ID\KOL_Map_V0.3_Mas_BETA\GPT4All LLM Connector (#2827)\filestore”

DEBUG LLM Prompter 3:2826 Connected to Python process with PID: 18460 after ms: 424

DEBUG DefaultPythonGateway Connected to Python process with PID: 25408 after ms: 419

DEBUG LLM Prompter 3:2826 Closing input stream on “C:\Users\acond\AppData\Local\Temp\knime_KOL_Map_V0_3_Ma_13684\knime_container_20240312_3249887834666782837.tmp”, 0 remaining

DEBUG LLM Prompter 3:2826 Closing file C:\Users\acond\AppData\Local\Temp\knime_KOL_Map_V0_3_Ma_13684\knime_container_20240312_1053865861540527679.knable (1 KB)

DEBUG LLM Prompter 3:2826 knime.api.types:The fallback value factory is used for the following type: {“value_factory_class”:“org.knime.core.data.v2.value.DefaultRowKeyValueFactory”}

DEBUG LLM Prompter 3:2826 knime.api.types:The fallback value factory is used for the following type: {“value_factory_class”:“org.knime.core.data.v2.value.StringValueFactory”}

DEBUG LLM Prompter 3:2826 knime.api.types:The fallback value factory is used for the following type: {“value_factory_class”:“org.knime.core.data.v2.value.DefaultRowKeyValueFactory”}

DEBUG LLM Prompter 3:2826 knime.api.types:The fallback value factory is used for the following type: {“value_factory_class”:“org.knime.core.data.v2.value.DefaultRowKeyValueFactory”}

DEBUG LLM Prompter 3:2826 knime.api.types:The fallback value factory is used for the following type: {“value_factory_class”:“org.knime.core.data.v2.value.StringValueFactory”}

DEBUG LLM Prompter 3:2826 knime.api.types:The fallback value factory is used for the following type: {“value_factory_class”:“org.knime.core.data.v2.value.StringValueFactory”}

DEBUG LLM Prompter 3:2826 knime.api.types:The fallback value factory is used for the following type: {“value_factory_class”:“org.knime.core.data.v2.value.StringValueFactory”}

DEBUG LLM Prompter 3:2826 knime.api.types:The fallback value factory is used for the following type: {“value_factory_class”:“org.knime.core.data.v2.value.StringValueFactory”}

DEBUG LLM Prompter 3:2826 ERROR: byte not found in vocab: ’

DEBUG LLM Prompter 3:2826 ’

WARN LLM Prompter 3:2826 Traceback (most recent call last):

File “E:\KNIME_5.1\plugins\org.knime.python3.nodes_5.2.1.v202402011442\src\main\python_node_backend_launcher.py”, line 720, in execute

outputs = self._node.execute(exec_context, *inputs)

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File “E:\KNIME_5.1\plugins\org.knime.python.llm_5.2.1.202402071416\src\main\python\src\models\base.py”, line 298, in execute

llm = llm_port.create_model(ctx)

^^^^^^^^^^^^^^^^^^^^^^^^^^

File “E:\KNIME_5.1\plugins\org.knime.python.llm_5.2.1.202402071416\src\main\python\src\models\gpt4all.py”, line 240, in create_model

return GPT4All(

^^^^^^^^

File “E:\KNIME_5.1\bundling\envs\org_knime_python_llm\Lib\site-packages\langchain\load\serializable.py”, line 97, in __init__

super().__init__(**kwargs)

File “pydantic\main.py”, line 339, in pydantic.main.BaseModel.__init__

File “pydantic\main.py”, line 1102, in pydantic.main.validate_model

File “E:\KNIME_5.1\bundling\envs\org_knime_python_llm\Lib\site-packages\langchain\llms\gpt4all.py”, line 142, in validate_environment

values[“client”] = GPT4AllModel(

^^^^^^^^^^^^^

File “E:\KNIME_5.1\bundling\envs\org_knime_python_llm\Lib\site-packages\gpt4all\gpt4all.py”, line 101, in __init__

self.model.load_model(self.config[“path”])

File “E:\KNIME_5.1\bundling\envs\org_knime_python_llm\Lib\site-packages\gpt4all\pyllmodel.py”, line 262, in load_model

llmodel.llmodel_loadModel(self.model, model_path_enc)

OSError: exception: access violation reading 0x0000000000000000

DEBUG LLM Prompter 3:2826 reset

ERROR LLM Prompter 3:2826 Execute failed: exception: access violation reading 0x0000000000000000

DEBUG LLM Prompter 3:2826 LLM Prompter 3:2826 doBeforePostExecution

DEBUG LLM Prompter 3:2826 LLM Prompter 3:2826 has new state: POSTEXECUTE

DEBUG LLM Prompter 3:2826 LLM Prompter 3:2826 doAfterExecute - failure

DEBUG LLM Prompter 3:2826 reset

DEBUG LLM Prompter 3:2826 clean output ports.

DEBUG LLM Prompter 3:2826 Removing handler beb8bba1-4ceb-42b2-aba2-4b86b2a4b60c (LLM Prompter 3:2826: ) - 23 remaining

DEBUG LLM Prompter 3:2826 LLM Prompter 3:2826 has new state: IDLE

DEBUG LLM Prompter 3:2826 Configure succeeded. (LLM Prompter)

DEBUG LLM Prompter 3:2826 LLM Prompter 3:2826 has new state: CONFIGURED

DEBUG LLM Prompter 3:2826 KOL_Map_V0.3_Mas_BETA 3 has new state: CONFIGURED_MARKEDFOREXEC

DEBUG DefaultPythonGateway Connected to Python process with PID: 15612 after ms: 429