Often people participating in this forum need to share the structure and data types of a certain dataset, but cannot use the real data for confidentiality reasons. This makes it difficult to understand what the problem is and what solutions to propose.

It is very boring to write fake datasets by hand and in addition there is the risk of not generating large (pseudo) random data but only biased subsets.

Python provides various packages to create fake datasets, with varying degrees of complexity. Faker is simple to use and great at generating synthetic datasets with different data types and domains (phone numbers, addresses, male / female names).

Example

import pandas as pd

from faker import Faker

import random

fake = Faker()

rows=10

data = [{

'ID': fake.lexify(text='ID??????????'),

'First_name': fake.first_name(),

'Last_name': fake.last_name(),

"Birthdate": fake.date_between(start_date='-50y', end_date='-18y'),

"Address": fake.address(),

"Mail": fake.email(),

'Job':fake.job(),

'Company':fake.company(),

'Memorable_quote': fake.sentence(),

'Last_visit':fake.date_between(start_date = '-10d'),

'Genres': fake.words(nb=random.randint(1,5), ext_word_list=['Jazz', 'Pop', 'Rock', 'Classic',

'Blues', 'Contemporary folk', 'Electronic', 'Hip hop'], unique=True),

'Application': fake.file_path(depth=3, extension="pdf"),

} for y in range(rows)]

output_table = pd.DataFrame(data)

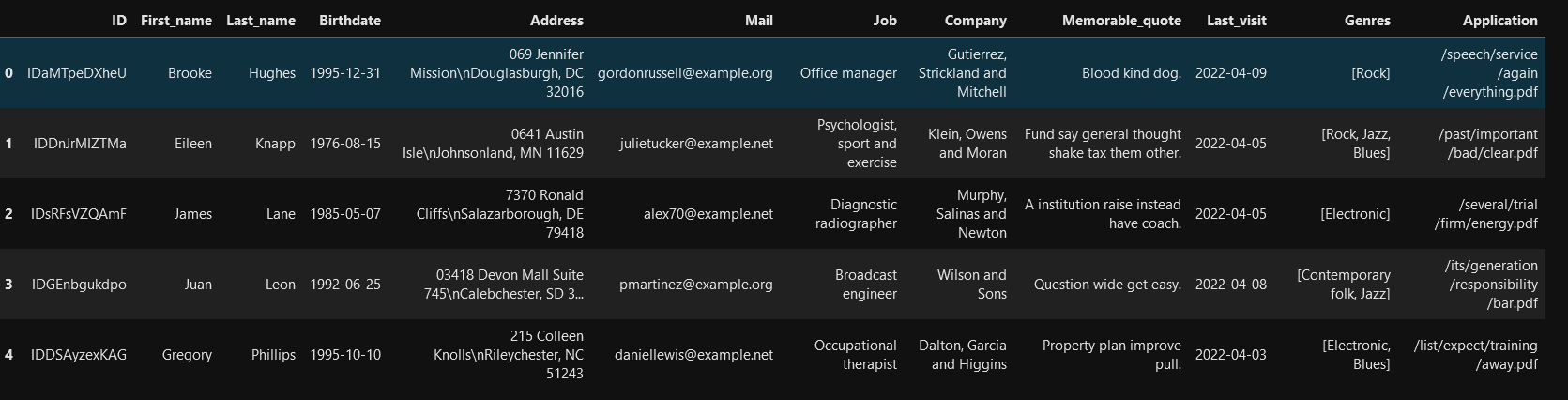

First rows of the dataset

The script can be run in a Python Script node, but, if the goal is to share the dataset, it is better to copy the data to a Table Creator node or save it in a file