Hi, I certainly know that Knime can import excel spreadsheets, but I cannot figure out to export said spreadsheet to a postgresql table. Is this possible?

Thank you so much,

SE

@Selster there is a dedicated connector for postgres. And a few examples on the hub.

To learn about KNIME and databases in general you might want to check the guide

https://docs.knime.com/latest/db_extension_guide/index.html?u=mlauber71#intro

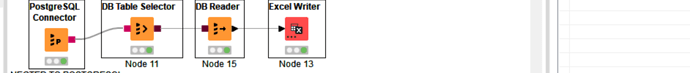

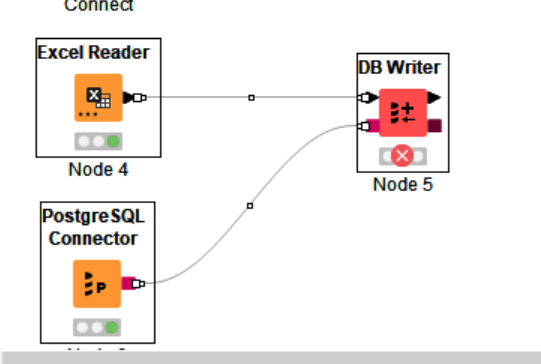

Thanks. I now see the PostgreSQL Connector and the DB Writer.

All I want to do is to send an excel spreadsheet to a postgreSQL table.

I don’t know how to paste my hypothetical workflow in here?, so I will write out my question:

Can I start with an Excel Reader (reading an excel sheet) and, separately a PostgreSQL Connector (that connects to a PostgreSQL database and table, and then “y” these directly into a DB Writer?

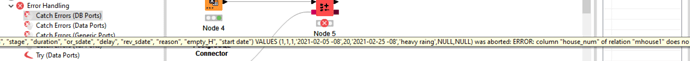

For some reason I am getting an error in the DB Writer. I can’t figure out how to copy the error message to a notepad. It is saying that there is an error is the first of the excel columns.

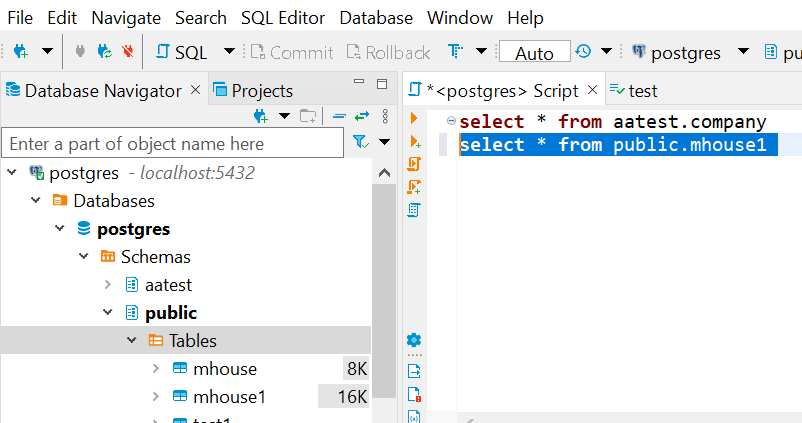

I found some errors in the postgreSQL table that I have corrected, I am still getting errors in DB write that other seem obscure. Essentially it would be good to have a node where I could test out a select table statement, before using the DB write node. Obviously the DB writer is doing both a create table and then generating the insert statements when attempting to write the postgreSQL table.

I corrected the errors and it is now working.

In the DB writer node, I enable overwrite the postgreSQL table, then it does not seem to matter if the existing postgreSQL column names match the ones in the excel file.

Thanks

This is going to be very useful

Insert a date-time to string node in between the excel reader and the db writer was also needed, and seems initially best when the date is converted to yyyyMMdd, otherwise more work will be needed before postgreSQL will directly accept the date, as seen in knime.

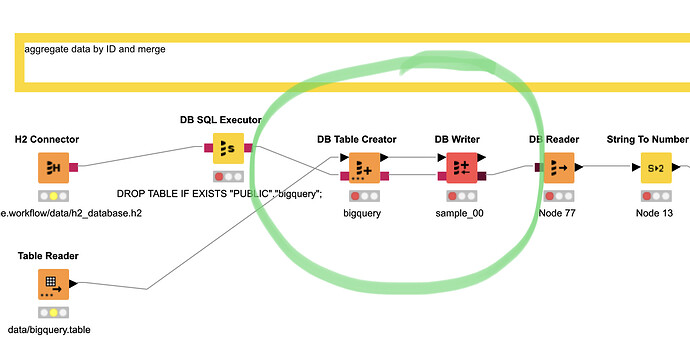

@Selster it might make sense to first create an empty table with the structure of the data you want to upload and then insert the data

Hi @mlauber71 , it’s not really necessary to create the table separately. If the db table does not exist, the DB Writer will create it and then insert into it. If the db table already exists, it will just insert into it. Of course in this case, the structure of the input data should match the existing db table’s structure.

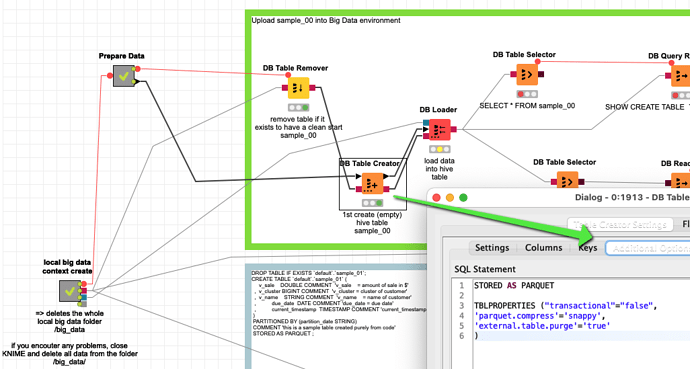

@bruno29a some databases like it that way. For Hive and impala it is the most stable (seems to depend on versions and default data structures like using external tables by default).

I wonder if other DBs might also benefit from a two step approach.

Hi @mlauber71 , interesting… I always thought it was Knime that was doing this (check if db table exists, if not exist then execute a create statement - probably could check this via the DB logs).

I did not know it depended on the DB system instead.

@bruno29a yes KNIME would take care of most of the DB operations and I think they have a lot of different DBs covered in their DB loader/table writer.

When it comes to big data formats there are a lot of special settings and problems that cannot be covered in a standard nodes that is supposed to work with every database and version.

Cloudera for example has introduced a whole lot of dependencies and compatibility issues in their version 6 so you will have to use some manual settings in your files that are not covered automatically by the DB Loader node.

https://docs.cloudera.com/documentation/enterprise/6/6.3/topics/impala_file_formats.html

=> if you throw in the ability to interact with Spark it gets even messier …

But the great KNIME software has a way for that also. You create the table with the additional settings (TBLPROPERTIES) and you are good to go. In the example for Hive I only used a few, there are more …

Also it might be that other databases might also benefit from creating a table first and then inserting. Also you can see what data type really has been created. That might be good if you have problems with data and time variables.

OK but we are getting away from the topic of this question. What I wanted to say: if you encounter problems try creating the empty table first with the help of KNIME

I think starting with the database table and its structure makes sense. In my case, I want to do some initial work in excel - possibly change columns, then import the sheet into Knime, and then export to postgreSQL. Do some work in sql, and then use Knime to bring it back to excel. But from the above examples, I now see that a lot of sql work can be done via Knime, much more than I had realized.

@Selster actually KNIME is really great at doing a lot of stuff to prepare and clean data. You can use SQL / database functions but also generic KNIME nodes to do that. If you come from Excel you might want to check out the resources below.

And as it is often the case with KNIME, as a platform you do not have to choose (or abandon Excel if you have great functions there) but you can integrate what you have (Postgres, Excel) and have it all. The above examples about Hive and Big Data might be a bit advanced if you just want to do some ETL, so you might want to start with something lighter.

There is a dedicated free book about migrating from Excel

And also this video “First Steps into KNIME Analytics Platforms for Excel Users”:

I come from dBase/foxpro and more recently postgreSQL. I use excel just because like so many computer users do, but it is sql I read and write. And I have often automated excel via foxpro. Foxpro has a strenght in that it allows one to mix programming code on the same page as sql, in the same interface. Does another IDE or language allow this? Pycharm, perhaps? Sometimes is seems frustrating that with python that one has to pass an sql statement to a variable before being able to execute it, so knime is so much better because the it looks like you can write an sql statement in one of the nodes, infortunately I don’t know how to do this. Perhaps knime sql is written in dbQuery? As far as Knime without sql, sometimes that can be frustrating since duplicating some sql statements can require so many knime nodes, I have really tried with knime sans sql, really.

If you like SQL you could just use it. You could use H2 or SQLite as local databases which live in memory or on a single file on your hard drive.

You could just load data there and do all the manipulation in SQL.

You can just write the code ‘free’ or use the prepared nodes for databases that would translate into SQL - but if you are familiar with it you might not need it.

Red DB connections would just transport the command to the database and you would have to do everything yourself (create tables etc) or the brown connections where you select one table and do the manipulations there.

This example is for a big data DB but the system and nodes are the same:

This topic was automatically closed 182 days after the last reply. New replies are no longer allowed.