I am trying to do something like what is being done [here], except that I am not working on a classification problem. I have a lot of missing values, and when I compare how well different models work (say, a regression vs. a regression tree), each seems to work well on different parts of the data.

I am trying to find something that I can use to “fuse” the model estimates as in the linked example, except that I also want to optimize the fusion weights. I’ve thought about training a new model whose inputs are the predictions from the other models, but I don’t want to introduce additional error if it can be avoided.
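To make the "optimize fusion weights" idea concrete, here is a minimal Python sketch for the two-model case. It is only an illustration, not a KNIME node or the linked workflow's method: the function names `optimal_blend_weight` and `blend` are hypothetical, and it assumes you have held-out targets plus both models' predictions on the same rows. It picks the single weight `w` in [0, 1] that minimizes the squared error of `w * a + (1 - w) * b`.

```python
def optimal_blend_weight(y_true, pred_a, pred_b):
    """Return w in [0, 1] minimizing sum((y - (w*a + (1 - w)*b))**2).

    Setting the derivative with respect to w to zero gives
    w = sum((a - b) * (y - b)) / sum((a - b)**2); the objective is a
    convex quadratic in w, so clipping to [0, 1] keeps it optimal
    under the constraint.
    """
    num = sum((a - b) * (y - b) for y, a, b in zip(y_true, pred_a, pred_b))
    den = sum((a - b) ** 2 for a, b in zip(pred_a, pred_b))
    if den == 0:
        # Both models predict the same values, so the weight is irrelevant.
        return 0.5
    return min(1.0, max(0.0, num / den))


def blend(pred_a, pred_b, w):
    """Weighted combination of two prediction lists."""
    return [w * a + (1 - w) * b for a, b in zip(pred_a, pred_b)]


if __name__ == "__main__":
    # Made-up validation data, purely for illustration.
    y_val = [10.0, 20.0, 30.0]
    model_a = [12.0, 22.0, 32.0]  # over-predicts
    model_b = [8.0, 18.0, 28.0]   # under-predicts
    w = optimal_blend_weight(y_val, model_a, model_b)
    print(w, blend(model_a, model_b, w))
```

The weight should be fitted on data that neither model was trained on (a validation split), otherwise the model that overfits more will tend to get too much weight.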

The link got lost, so it is not easy to understand what you want to do. Maybe you could elaborate further on your problem.

Thanks for pointing that out. Here is a link to the community example:

https://hub.knime.com/knime/workflows/Examples/04_Analytics/13_Meta_Learning/04_Cross-Platform_Ensemble_Model*7o9ZabQ1LrMyp3Ca

I am trying to do something similar, but for regression. This example is trying to predict classes (and as far as I can tell the prediction fusion node only works for classification problems).

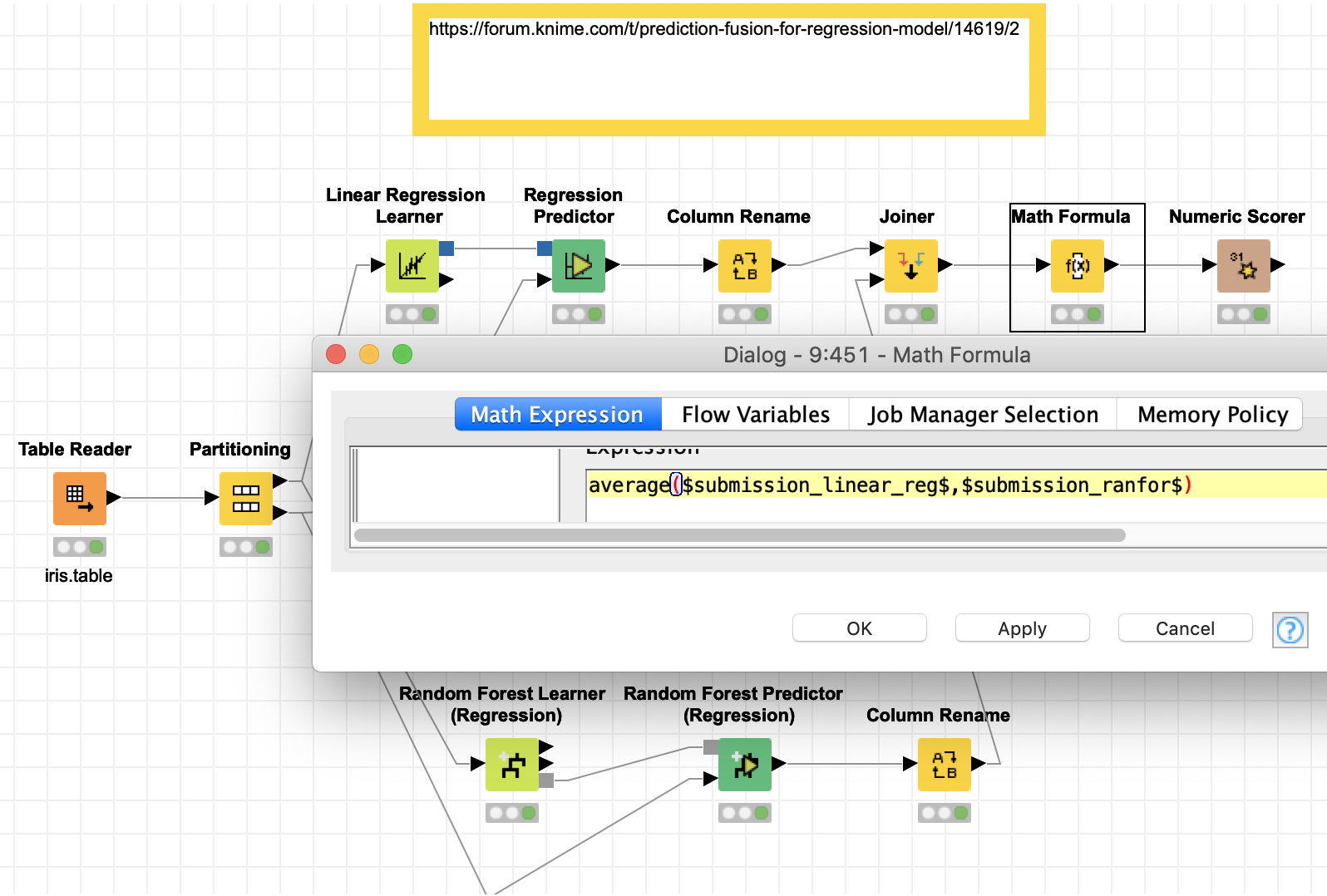

Have you thought about just using the average (or median) of the various predictions?

m_040_combine_regression_models.knwf (196.2 KB)
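Outside of KNIME, the averaging suggestion above can be sketched in a few lines of Python. This is not taken from the attached workflow; `combine_predictions` is a hypothetical helper, and treating `None` as "this model produced no prediction for this row" is an assumption made because the original question mentions many missing values.

```python
from statistics import mean, median


def combine_predictions(per_model, how="mean"):
    """Combine predictions row by row across models.

    per_model: list of prediction lists, one list per model, all the
    same length. None marks a missing prediction (an assumption of
    this sketch) and is skipped for that row.
    """
    if how not in ("mean", "median"):
        raise ValueError("how must be 'mean' or 'median'")
    reducer = mean if how == "mean" else median
    combined = []
    for row in zip(*per_model):
        available = [p for p in row if p is not None]
        # If no model produced a value, the combined prediction is missing too.
        combined.append(reducer(available) if available else None)
    return combined


if __name__ == "__main__":
    # Made-up predictions from three models for two rows.
    preds = [[1.0, 2.0], [3.0, 4.0], [100.0, 6.0]]
    print(combine_predictions(preds, how="median"))
```

The median is less sensitive than the mean when one model is far off on a particular row, which may matter if the models behave very differently from each other.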