Dear Carsten,

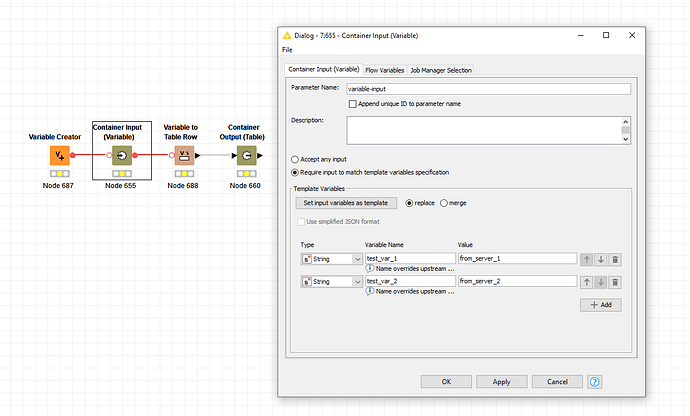

Many thanks for your reply. We had also considered combining the Container Input (Table) and “Table Row to Variable” nodes. Being able to use the Container Input (Variable) node via knimepy would simply have made the implementation easier, as we could have used the existing workflow (already tested in production) as is.

Nevertheless, we will go with this approach and see how it works for us.
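For reference, a minimal sketch of this workaround, assuming the workflow starts with a Container Input (Table) node that feeds a “Table Row to Variable” node: the values that would have gone into Container Input (Variable) are packed into a single-row pandas DataFrame, one column per variable. The helper names and the example variable names are hypothetical, not part of knimepy.

```python
import pandas as pd


def variables_to_row_table(variables):
    """Pack a dict of flow-variable values into a one-row DataFrame.

    Each key becomes a column; "Table Row to Variable" in KNIME then turns
    each column of the first row into a flow variable. (Hypothetical helper.)
    """
    if not variables:
        raise ValueError("at least one variable is required")
    return pd.DataFrame([variables])


def run_with_variables(workflow_path, variables):
    # Imported lazily so the helper above works without knimepy installed.
    import knime

    with knime.Workflow(workflow_path) as wf:
        # Assumption: the Container Input (Table) node that feeds
        # "Table Row to Variable" is the first data table input.
        wf.data_table_inputs[0] = variables_to_row_table(variables)
        wf.execute()
        return wf.data_table_outputs[0]
```

For example, `variables_to_row_table({"threshold": 0.5, "mode": "fast"})` yields one row with the columns `threshold` and `mode`, which the KNIME side converts back into two flow variables.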

I would like to ask a few related questions under this topic, as they go in a similar direction:

1. Is there complete documentation of the knimepy toolkit available?

The knimepy GitHub repository (GitHub - knime/knimepy) and the blog post (https://www.knime.com/blog/knime-and-jupyter) are great places to start and contain some examples, but I could not find complete documentation of the functions available in the toolkit.
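Until a full API reference turns up, one way to get an overview is to introspect the installed package. This is a generic standard-library sketch (the helper `list_public_api` is hypothetical); it only reports what the locally installed knimepy version actually exposes.

```python
import inspect


def list_public_api(obj):
    """Return (name, signature) pairs for the public callables of a module or class."""
    entries = []
    for name, member in inspect.getmembers(obj):
        # Skip private/dunder names and non-callables (e.g. properties).
        if name.startswith("_") or not callable(member):
            continue
        try:
            sig = str(inspect.signature(member))
        except (TypeError, ValueError):
            sig = "(...)"  # some builtins do not expose a signature
        entries.append((name, sig))
    return entries
```

For example, `list_public_api(knime)` and `list_public_api(knime.Workflow)` would list the module-level functions and the `Workflow` methods. Properties such as `data_table_inputs` are not callable and are therefore skipped, so `help(knime.Workflow)` is still worth reading for those.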

2. Defining the inputs and outputs for multiple data tables:

Is there a way to address a specific Container Input or Container Output (Table) node via its associated unique ID?

So far there does not seem to be much control, e.g. when using the following statements from GitHub:

    import knime

    with knime.Workflow(r"C:\Users\berthold\knime-workspace\ExploreData01") as wf:
        wf.data_table_inputs[0] = input_table_1
        wf.data_table_inputs[1] = input_table_2
        wf.execute()
        output_table = wf.data_table_outputs[0]  # output_table will be a pd.DataFrame

I am referring to the answer given by potts in the discussion here: Using KNIME Workflows in Jupyter Notebooks - #6 by potts, especially the part where he mentions the functional APIs (“run_workflow_using_multiple_service_tables()”) and attributes (“data_table_inputs_parameter_names”). Are there any code examples related to these?
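A sketch of addressing inputs by name instead of by position, using the `data_table_inputs_parameter_names` attribute mentioned in potts' post. It matches on the Container Input (Table) parameter name rather than a node ID, and it assumes (not verified here) that the attribute lists the names in the same order as the positions of `wf.data_table_inputs`. The helper `assign_inputs_by_name` is hypothetical.

```python
def assign_inputs_by_name(wf, tables_by_name):
    """Place each table at the input index whose parameter name matches.

    Assumes wf.data_table_inputs_parameter_names lists the Container Input
    (Table) parameter names in the same order as wf.data_table_inputs.
    (Hypothetical helper; check the ordering against your knimepy version.)
    """
    names = list(wf.data_table_inputs_parameter_names)
    # Validate everything first so a typo does not leave inputs half-assigned.
    unknown = set(tables_by_name) - set(names)
    if unknown:
        raise KeyError(f"no Container Input (Table) named {sorted(unknown)}; available: {names}")
    for name, table in tables_by_name.items():
        wf.data_table_inputs[names.index(name)] = table
```

Inside `with knime.Workflow(...) as wf:`, printing `wf.data_table_inputs_parameter_names` first shows the exact strings to use as keys before calling `assign_inputs_by_name(wf, {...})` and `wf.execute()`.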

3. Similarly, what do the following expressions do?

- wf.data_table_outputs[:] compared to wf.data_table_outputs[0]

    import knime

    with knime.Workflow("DemoWorkflow01") as wf:
        wf.execute()
        results = wf.data_table_outputs[:]

- wf.data_table_inputs[:] compared to wf.data_table_inputs[0]

    import knime
    import pandas as pd

    input_table_1 = pd.DataFrame([["blau", -273.15], ["gelb", 100.0]], columns=["color", "temp"])

    # Requires a valid user account on a running KNIME Server instance.
    with knime.Workflow(
        "https://your_server.your_company.com/knime/your_directory/your_workflow_name",
        username="arthur.dent",
        password="forty-two"
    ) as wf:
        wf.data_table_inputs[:] = [input_table_1]
        wf.execute(reset=True, timeout_ms=10000)  # Default timeout is usually plenty.
        output_table = wf.data_table_outputs[0]
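If `data_table_outputs` and `data_table_inputs` behave like ordinary Python lists (an assumption based on the examples, not verified against the knimepy source), the expressions in question follow standard slicing rules: `[0]` refers to one table, `[:]` reads or replaces all of them at once. A minimal illustration with plain lists standing in for the workflow attributes:

```python
# Plain lists stand in for wf.data_table_outputs / wf.data_table_inputs.
# That the knimepy attributes follow list semantics is an assumption.

outputs = ["table_A", "table_B"]  # stand-in: results of two Container Output (Table) nodes

first = outputs[0]        # one element: only the first output table
all_tables = outputs[:]   # a new (shallow-copied) list with every output, in order

inputs = [None, None]     # stand-in: two Container Input (Table) slots
inputs[0] = "df_1"        # replaces only the first slot; the length stays 2
inputs[:] = ["df_1", "df_2"]  # replaces the whole contents in one statement

shrunk = [None, None]
shrunk[:] = ["df_1"]      # slice assignment can change the length: one element left
```

Under that assumption, `wf.data_table_inputs[:] = [input_table_1]` in the server example sets all inputs of a workflow with exactly one Container Input (Table) node, and `wf.data_table_outputs[:]` returns every output table as a list.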

4. I get an error when using the option “live_passthru_stdout_stderr” in a Jupyter notebook.

I am referring to potts' answer here: Error when using knimepy from jupyter - #2 by potts
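One plausible cause, assuming knimepy hands `sys.stdout`/`sys.stderr` to the KNIME subprocess when `live_passthru_stdout_stderr` is enabled: notebook kernels replace these streams with objects that have no usable OS file descriptor, which `subprocess` needs. This stdlib-only sketch shows how to detect that and fall back to capturing the output; `has_real_fileno` and `run_and_forward` are hypothetical helpers, not part of knimepy.

```python
import io
import subprocess
import sys


def has_real_fileno(stream):
    """True if `stream` is backed by an OS-level file descriptor."""
    try:
        stream.fileno()
    except (AttributeError, io.UnsupportedOperation, ValueError):
        return False
    return True


def run_and_forward(cmd):
    """Run `cmd`; pass output straight through when the streams allow it,
    otherwise capture it and print it after the process ends (not live)."""
    if has_real_fileno(sys.stdout) and has_real_fileno(sys.stderr):
        sys.stdout.flush()  # keep Python's buffered output ahead of the child's
        return subprocess.run(cmd, stdout=sys.stdout, stderr=sys.stderr).returncode
    result = subprocess.run(cmd, capture_output=True, text=True)
    print(result.stdout, end="")
    print(result.stderr, end="", file=sys.stderr)
    return result.returncode
```

In a notebook, `has_real_fileno(sys.stdout)` is typically `False`, which would explain why live pass-through fails there while the default (captured) mode works.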

Some of the questions above may have been asked in other topics in the KNIME forums, but I could not find an answer there either.

It would be great if someone has an answer or further information.

Thanks a lot.

Best

A.