Hello,

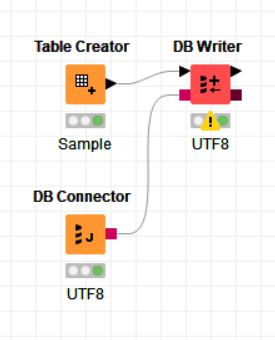

I am trying to insert data into Teradata Database, using DB Writer node. The text in the data includes some symbols like ™, č etc.

I am using the UTF-8 encoding for the database connection, as well as for the input excel file.

jdbc:teradata://192.168.1.1/DATABASE=DB_NAME,CHARSET=UTF8

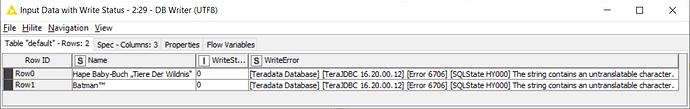

I get the following error:

[Teradata Database] [TeraJDBC 16.20.00.12] [Error 6706] [SQLState HY000] The string contains an untranslatable character.

However, if I use CHARSET=ASCII, i don’t get an error, but these characters are replaced by ‘?’

Thank you!

Nauman

Hello naumanahmad,

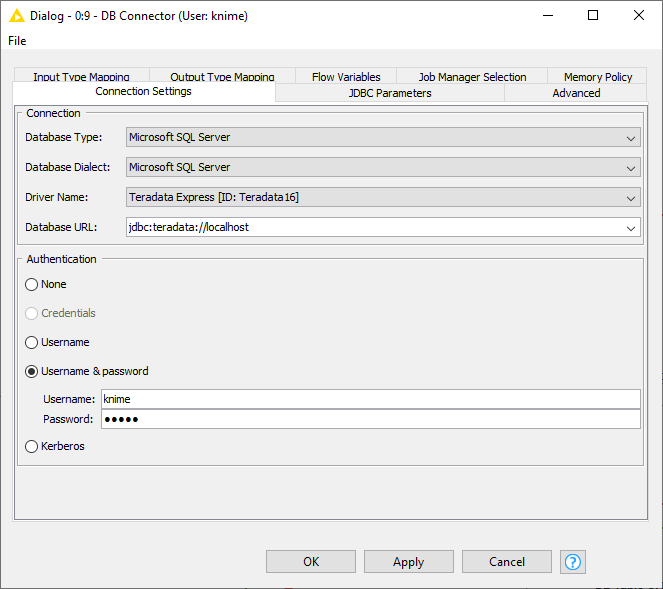

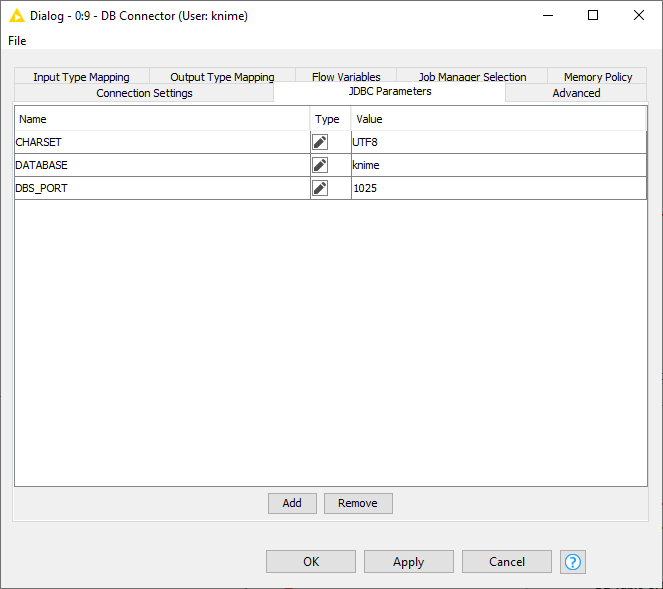

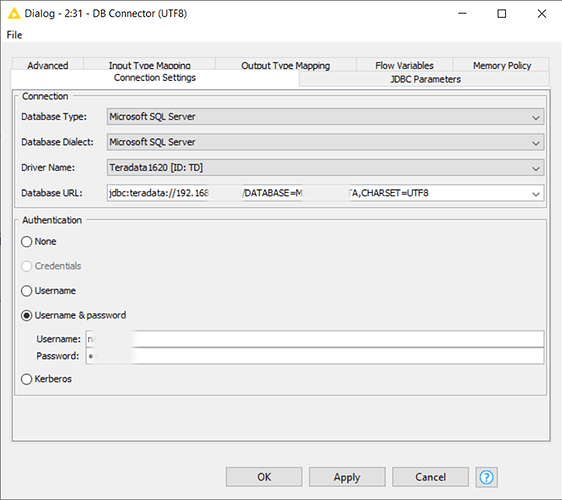

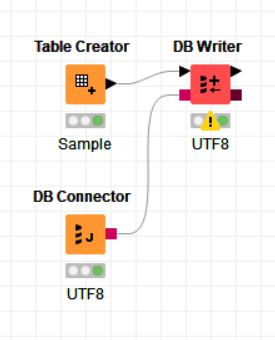

I guess I was able to reproduce the problem. I used the DB Connector node with the following settings to connect to my Teradata Express instance:

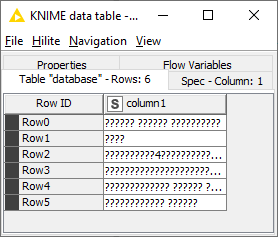

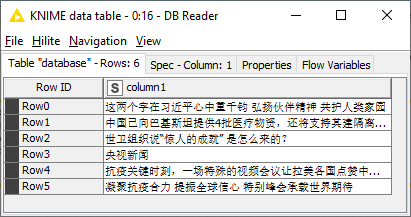

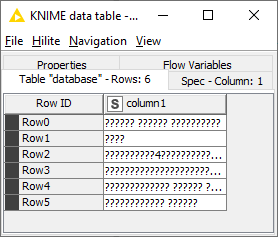

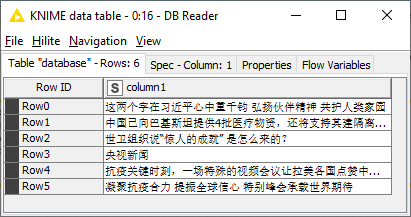

Then I tested to write and read Chinese characters to the database but also just got this back:

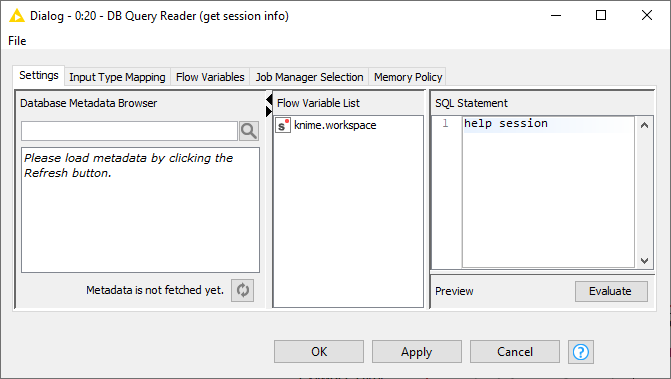

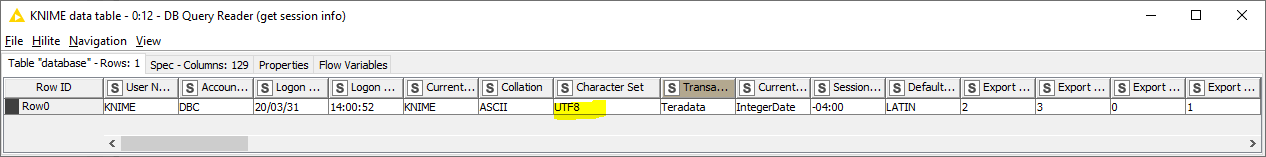

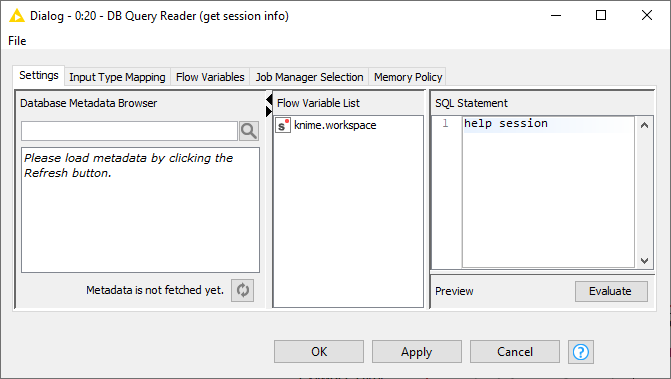

So I check the session character set by executing the help session statement via the DB Query Reader

which still returned ASCII as character set

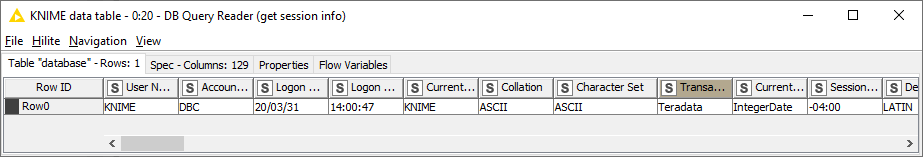

So apparently the Teradata JDBC driver ignores the CHARSET parameter when passed in to the driver as properties object. So I changed the bold JDBC parameter settings in the Advanced tab of the DB Connector node to have KNIME append the parameters to the URL starting with ‘/’ and separated by ‘,’ instead of passing them on as properties which is the standard way for JDBC

Hurray, I finally could write and read Chinese characters into my Teradata database

Checking the session character set by executing the help session in the DB Query Reader confirms that now the session uses UTF8

So please make sure that you either append the parameters to Database URL in the Connection Settings tab or if you have already specified them in the JDBC Parameter tab like I did change the settings in the Advanced tab as shown above. If you still have problems use the DB Reader node to execute the help session statement to check that the DB session is really using UTF8 as character set.

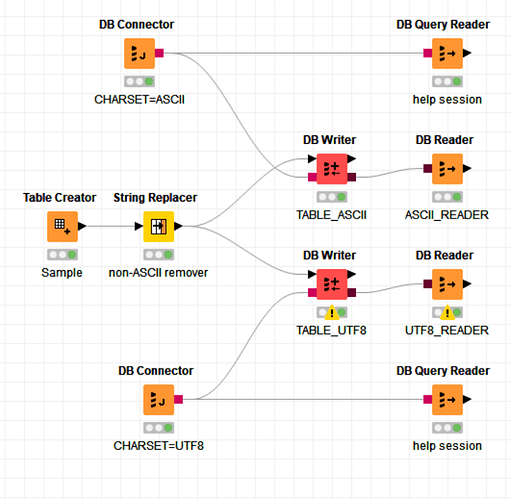

Attached you can find the workflow I used for testing.

Bye

Tobias

WriteChinese2TeradataExpress.knwf (44.7 KB)

3 Likes

Thank you @tobias.koetter for the effort!

However I am still not able to resolve the issue.

I have already been using the JDBC parameters in the Database URL as you advised. The DB Reader node with help session suggests that the connection is actually using UTF8. However I still get the same error.

Please see my workflow attached.

When I use CHARSET=ASCII, i get no error, but the characters with code>128 are replaced with ?. When I use CHARSET=UTF8, i get error for characters with code>256.

For now as a work around, i have used a String Replacer node to remove the characters with code>256. However I am hoping for a fix.

Thank you and Regards,

Nauman

nonASCII_characters_TD_knime.knwf (32.5 KB)

Hello Nauman,

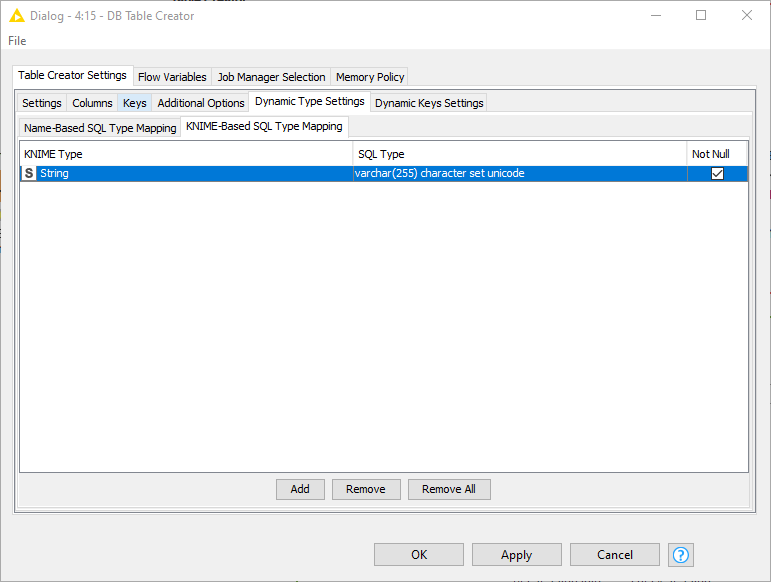

are you also creating the table with the DB Writer node? If this is the case can you replace the DB Writer node with a DB Table Creator node and a DB Insert node and make sure that for the KNIME type String KNIME should create database columns with the data type

varchar(255) character set unicode

To do so open the node dialog of the DB Table Creator node and go to the Dynamic Type Settings->KNIME-Based SQL Type Mapping tab and configure it as shown here:

The DB Writer uses the default data type which is for string columns varchar(255).

For more details see the documentation.

Bye

Tobias

3 Likes

Thank you @tobias.koetter, setting the SQL Type to ‘varchar(255) character set unicode’ in DB Table Creator did the trick!

Regards,

Nauman

1 Like

Great that it works. Closing the topic.