Hello,

I can’t help myself, so I’m turning here.

I have a table which has 97 columns and 12 000 000 rows.

I am working on a computer which has 32 GB RAM for Knime 4.7.7 I have dedicated 24 GB RAM.

The table contains historical changes i.e. each record is defined by one ID.

The workflow works for me but unfortunately it takes three days , for one above table . I have 100 such tables. Of course not all tables have so many columns and so many rows but still it is a larger number of tables.

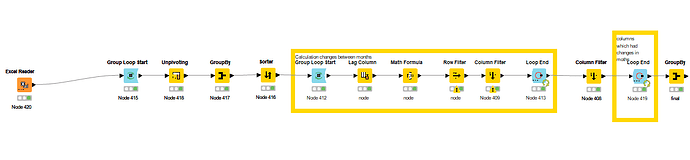

Unpivot is good but my idea is to take each column in a cycle and evaluate it separately and at the end of the cycle combine it into one table .

The idea is to find out the most changing values in a given columns over a certain period.

Any idea?

Marek

Hi @MarekV ,

also loops can impact a lot on performance.

Any chance you can give us a look at the workflow/data and what happens inside to grasp the logic?

Have a nice day,

Raffaello Barri

Hi,

I am happy to upload and I had prepared .

Unfortunately I not allowed to upload it ![]() due to company policy only JPEC or PNG.

due to company policy only JPEC or PNG.

Marek

What if you upload the worflow with some dummy data?

RB

Hello @lelloba ,

i have prepared workflow with dummy data.

Marek

unpivoting in different way.knwf (110.6 KB)

Hi @MarekV,

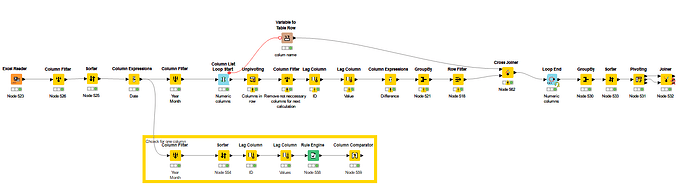

here’s a workflow I’ve prepared, hope it helps.

- I noticed there’s several string columns you cannot evaluate with the diff formula: I’ve excluded them from the input node

- I’ve included some garbage collector nodes to save space

- I’ve included a column list loop to process columns one by one

- Tested with 10M rows and a 12GB dedicated RAM and works in faster time (one hour roughly)

Tell me if it works for you!

Have a nice weekend,

Raffaello

HI @lelloba

Thank you very much for your answer.

You are using version 5.2.1, I am using version 4.7.0.

Node Run Garbage Collector cannot be loaded in version 4.7.0. I assume that Node is only available from version 5.0.

As for the workflow itself. I think we didn’t quite understand each other.

The table stressTestDef.table is unreachable for me. It would probably be necessary to promote it somehow. The path you mentioned doesn’t work for me.

At least according to the string, it is not possible to perform comparisons at the string level. Ok I threw out all the text items from my spreadsheet. But I also need to use them to compare strings.

In my table there were records for only one ID, namely 23. I didn’t want to mix other IDs and especially to make it clear that the ID is unique and unchanging within one cycle.

The goal of the whole exercise is to find out the number of monthly changes on single columns. Giving an example, if the text “Surname Name” is listed in the “NAME” column for 10 months, then within 10 months the change is 0 and this is not counted anywhere. But if in the 11th month in the “NAME” field there will be a change of “SURNAME NAME”, then in the eleventh month there will be a change of 1. This principle is preserved overall for all columns.

That’s why I don’t understand why you use lag twice?

I’m only interested in the values in the column. I am not interested in changing the name of the column, because I do not expect to change the name of the column and at the same time I will not compare two different columns that have no connection.

I’m not sure if I explained it correctly.

But I need to anlyze your workflow detailed . I let know tomorrow. Probably also mistake is on my site.

Hi @lelloba ,

so I’ve looked at your solution and I have to admit that I like it and it’s also functional.

With the help of you and chatGPT I have modified it so that it fits me overall.

I admit that the problem was complicated at least I think so. I will now check the results for a few days.

Unpioting a table of 97 columns and 12 mil records took me 3 days.

With your solution, it took 3.5 hours. That is incomparably a very good result. I also included text comparison, not just numbers so now it’s complete thank you very much.

I was still using the loop just by rows which I’m used to but using the loop within columns I never did.

Attached is the final workflow.

Marek

unpivoting in different way marek v2.knwf (155.1 KB)

This topic was automatically closed 7 days after the last reply. New replies are no longer allowed.