You are welcome. I did notice a difference, but it is negligible. I compared my MacBook Pro (16 GB memory, 1 TB SSD, quad-core i7, 2.6 GHz) with an AWS WorkStation of the type “Power” (4 vCPU, 16 GiB memory).

The workflow I compared is fairly complex, with more than one thousand nodes, and processes files of different sizes, each with 60k to 300k rows and 20 to ca. 1.5k columns. So a vast spectrum.

The “penalty” is around 20 to 30 %. Keep in mind that IOPS and overall throughput are crucial. AWS WorkStations use EBS volumes, which have a significantly lower throughput than an SSD in the machine in front of you.

EBS volumes of the type gp2 have a maximum throughput of 250 MB/s per volume. The SSD in my machine is claimed to be at least twice as fast.

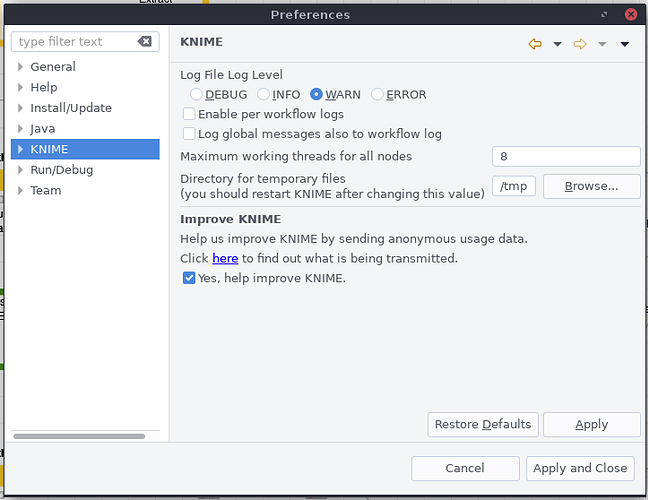
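To put those throughput figures into perspective, here is a minimal back-of-the-envelope sketch. It assumes purely sequential I/O at the sustained maximum, takes "at least two times faster" literally as exactly 2x, and uses a hypothetical 10 GB of data; none of these are measured values from my workflow.

```python
def transfer_time_seconds(size_mb: float, throughput_mb_s: float) -> float:
    """Idealized time to stream size_mb at a sustained throughput (no caching, no seeks)."""
    if throughput_mb_s <= 0:
        raise ValueError("throughput must be positive")
    return size_mb / throughput_mb_s


GP2_MAX_MB_S = 250.0                 # gp2 per-volume maximum quoted above
LOCAL_SSD_MB_S = 2 * GP2_MAX_MB_S    # assumption: exactly twice the gp2 maximum

data_mb = 10_000.0                   # hypothetical: 10 GB read or written during a run

for label, rate in [("gp2", GP2_MAX_MB_S), ("local SSD", LOCAL_SSD_MB_S)]:
    print(f"{label}: {transfer_time_seconds(data_mb, rate):.0f} s")
# gp2: 40 s
# local SSD: 20 s
```

Raw I/O time doubles in this idealized case, but a workflow also spends time on CPU and memory work, which is probably why the overall penalty I saw was only 20 to 30 %.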

Parallelism, especially when overdone, can easily exhaust IOPS / throughput. A fairly old study (2007), which you should not take as a reference, suggested running no more than 4 threads.

My personal experience tells me that it’s about balance: the cores available (which determine the number of parallel threads), the memory required during execution, and IOPS / throughput. In 90 % of all situations it comes down to proper workflow design. Always ask yourself whether something can be done differently, or “what does this or that do to the system?”.
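The balancing act can be sketched as "the scarcest resource sets the limit". This is only a rough heuristic of mine, not anything Knime does internally. The function name and the per-thread memory and disk figures are hypothetical and would have to be estimated for your own workflow.

```python
def max_parallel_threads(
    cores: int,
    memory_gb: float,
    memory_per_thread_gb: float,
    disk_mb_s: float,
    disk_per_thread_mb_s: float,
) -> int:
    """Return the thread count allowed by the scarcest of CPU, memory and disk throughput."""
    if min(cores, memory_gb, memory_per_thread_gb, disk_mb_s, disk_per_thread_mb_s) <= 0:
        raise ValueError("all inputs must be positive")
    by_cpu = cores
    by_memory = int(memory_gb // memory_per_thread_gb)
    by_disk = int(disk_mb_s // disk_per_thread_mb_s)
    # Always allow at least one thread, even if a single thread exceeds a budget.
    return max(1, min(by_cpu, by_memory, by_disk))


# Hypothetical example: 4 cores, 12 GB usable memory, 2 GB per thread,
# a 250 MB/s gp2 volume, and 100 MB/s of disk traffic per thread.
print(max_parallel_threads(4, 12.0, 2.0, 250.0, 100.0))  # 2
```

In this made-up case the disk, not the CPU, caps the useful parallelism. With a 500 MB/s disk the same call would return 4 and the cores become the limit.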

Hardware-wise, I recommend one external SSD for data storage and a second fast SSD for the workspace, so it is separated from the OS. 16 GB of memory lets you run even Chrome alongside Knime (I do so on my MBP!).

The C5 instances are nice because you don’t have to wait for CPU credits to build up or do some fancy burst-mode calculation to keep expenses in check. The bottleneck, though, as pointed out before, is the EBS volume. It may be worth scaling down and testing with provisioned IOPS. The number of cores is not that important; eight are plenty.

Update: I recently discovered something strange: Knime core files consuming a lot of space