In my project, I connect to a number of databases at many places in my workflows. The decision which database to connect is in many cases made in dependency on a particular context. My workflows at some points figure out the source of the data to work with is in a particular database. Also, they figure out which is a target database, where to write the result data. I hope I’m clear.

In KNIME 3.7, I solved this by introducing a Wrapped Metanode Template. The Metanode has got a single input - an identifier of the data source to connect to and single output - a database JDBC connection (red rectangle). There is a logic in the Metanode that reads an external configuration .properties file and extracts the right connection parameters using data source identifier as a key.

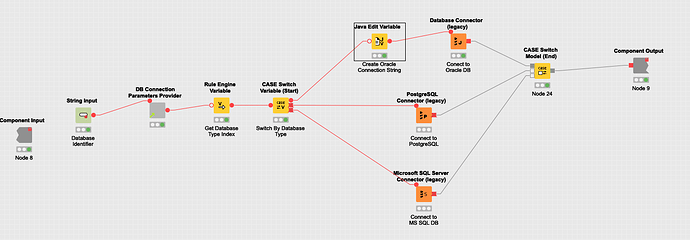

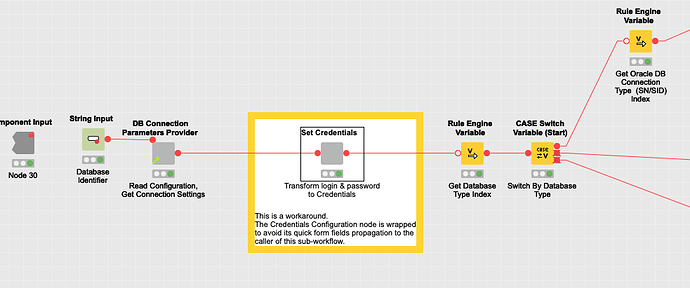

This is a content of the Wrapped Metanode my workflows use in order to obtain a database connection:

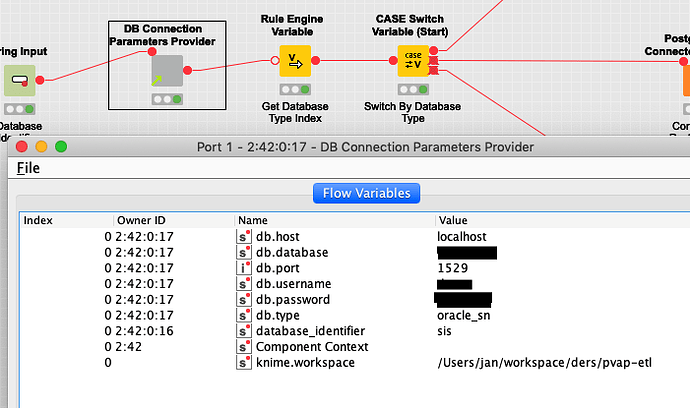

As you can see, a caller puts an identifier of the Datasource it wants to connect using Quick Form configuration. After that it the logic reads a configuration and extracts connection parameters. The output of the Connection Parameters Provider looks as follows:

A subsequent logic decides which type of DBMS to connect to and, in case of Oracle DB, whether to use a SID or a Service Name. After that, it attempts to connect and returns the connection to the caller.

I have spread this solution all over my project and it used to work like a charm.

After I upgraded to KNIME 4 I learnt the whole DB/Database node group, containing Database Connector, Database Reader and many other nodes I’ve been using so far, had been marked “legacy”. Well, I started to elaborate on how demanding it would be to migrate to the nodes KNIME encourages me to use.

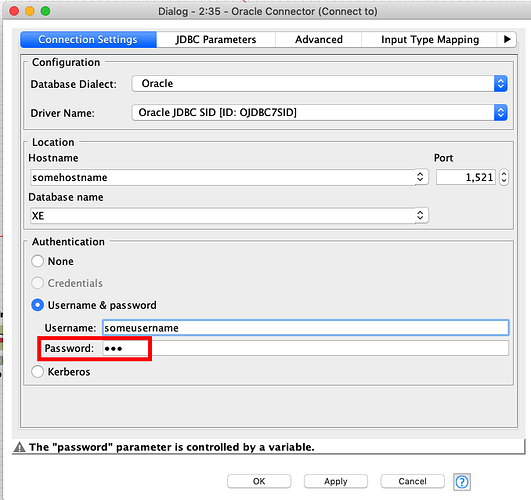

What revealed shortly after the upgrade, was it’s really complicated to set up DB connectors (Oracle Connector in my case) with a password obtained at runtime from an external configuration. Well, first I figured out it is not possible to configure an Oracle Connector with a password a flow variable holds. I’d guest the node simply ignores there it is configured to read a password from a flow variable. I proved this having flow variable based password configured and setting up a password in node configuration at the some time. If I used a correct password in the form, it connected and it doesn’t if I used wrong one. So the flow variable value was ignored completely.

Please note, there is a message The password parameter is controlled by a variable. I can feel a foreboding it could relate to an expected type the flow variable holding the password but I don’t have a clue how to manage this.

Well, I introduced credentials to my workflow and I configured it with a username and a password obtained from configuration. And it worked. Great.

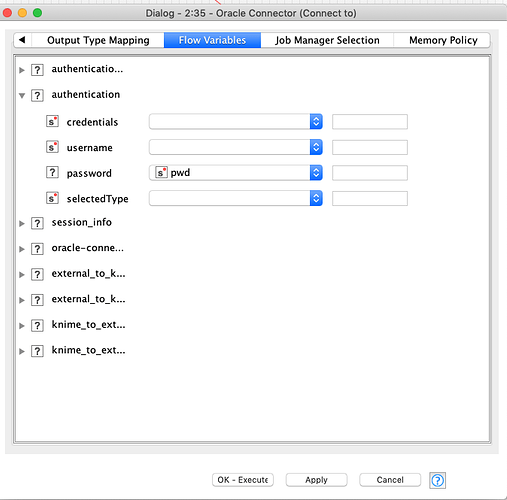

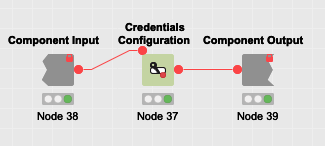

Here you can see what I developed.

More precisely, it worked until I made the component (which is a descendant of wrapped metanodes as I’ve learnt) shared (which stands for former saving a wrapped metanode as a template) and made a new workflow use it. It didn’t work there. I didn’t connect. The password was blank. Uh. I had I look inside the component and I figured out the password flow variable is not configured to hold the password anymore.

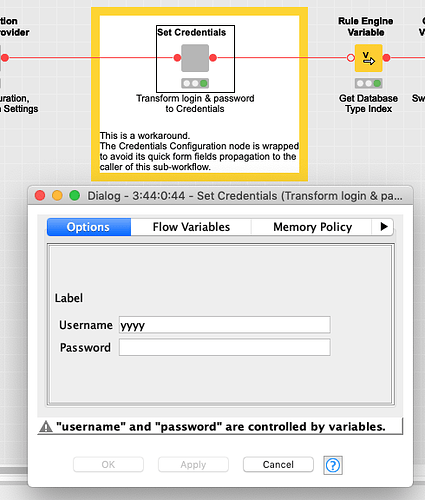

Here you can see the password and the username are be controlled by a variable. I made this screenshot at the place I developed the component.

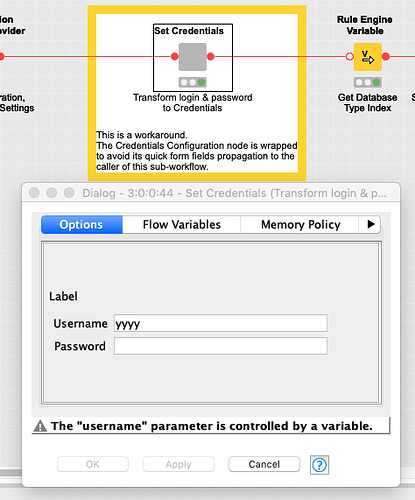

But here:

Just the username is controlled by a variable, not the password anymore. This screenshot is from the workflow I dropped the shared component.

Well, the behavior of a shared component differs between a place the component was created and a place it was inserted obviously differs.

Now, back to my question. I need a DB Connector provider capable of providing me with DB connections to a database instance and a schema it receives as an input. I need a configurable solution because particular database instances will differ between clients and environments (development, test, acceptance, production). Any suggestion?

Thank you and sorry for such an extensive description.