Hi all, I am a new KNIME user. I am trying to get roughly 18,000 records from Salesforce using your workflow tutorial, but the first call returns only 2,000 records. According to the documentation, the rest must be fetched with another call that uses the nextRecordsUrl from the response (https://developer.salesforce.com/docs/atlas.en-us.api_rest.meta/api_rest/dome_relationship_traversal.htm).

I used it in the next GET Request node to fetch the remaining records, but the result is still the previous 2,000 records and I can’t get the rest of the dataset. Can anyone help me? Thanks, best, E.

Hi,

Extract the “nextRecordsUrl” value separately, then create the new request URL in a String Manipulation node using this expression:

join("https://yourInstance.salesforce.com", $nextRecordsUrl$, " -H \"Authorization: Bearer token\"")

Be careful to replace “yourInstance” and “Bearer token” values with your own values.

Then use this new URL in a Get Request node and get the next part.

You can finally concatenate the results.
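For anyone who wants to check the same step outside KNIME, here is a minimal Python sketch of building the follow-up request. It assumes nextRecordsUrl is a path relative to the instance URL, and it passes the token as a request header instead of putting it in the URL string, which is what HTTP clients normally expect. The function name `build_next_request`, the example path, and the token are placeholders, not values from this thread.

```python
from urllib.parse import urljoin


def build_next_request(instance_url, next_records_url, access_token):
    """Return (url, headers) for the follow-up call (hypothetical helper)."""
    # nextRecordsUrl is assumed to be a relative path, so join it to the instance URL.
    url = urljoin(instance_url, next_records_url)
    # The bearer token travels in the Authorization header, not in the URL.
    headers = {"Authorization": f"Bearer {access_token}"}
    return url, headers


# Placeholder values: replace with your own instance, path and token.
url, headers = build_next_request(
    "https://yourInstance.salesforce.com",
    "/services/data/v52.0/query/01gD0000002HU6KIAW-2000",
    "token",
)
print(url)
```

In KNIME terms, the URL would go into the column read by the GET Request node, and the header would be set in that node's request headers.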

Many thanks, I will try as you suggest.

Hello again,

I would like to build a loop to run all the requests. Could you suggest which loop node is best? I am trying to use the ‘Table Row to Variable Loop Start’, but I can still get only the first 2,000 records… Many thanks again! E. P.S. Is there any example of this?

I think you don’t need to use a loop there. After getting the responses for all the requests, you can use a Chunk Loop Start to extract data from each response separately.

Let me know if I’m missing something.

Many thanks. If I don’t use a loop, I have to set up a lot of calls by hand… Do you think I can overcome this with a loop? I mean, is there a way to loop over the requests without overwriting the previous results, and without making a lot of separate calls? Maybe by adding a Java Snippet node between the GET Request nodes? Many thanks!!!

is there any example?

Hi,

I’m not using Salesforce, but this sounds like pagination I know from other APIs. For this, I found the Recursive Loop Start to be the most useful loop to collect the data from the first page until the last one.

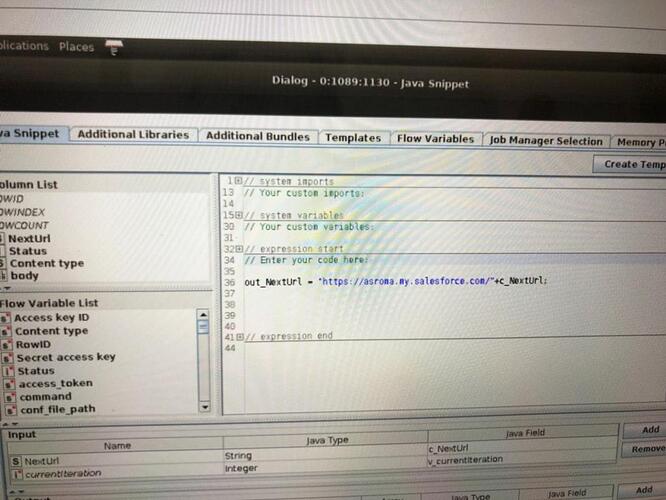

Start with the Recursive Loop Start (its input is your table with the first call). Fetch your data with GET Request and bring it into a KNIME table (e.g. with JSON Path). Make sure you include a column with the nextRecordsUrl, and use a Column Splitter node to separate the data you are interested in from the next URL. The data go to the upper inport of the Recursive Loop End.

For the next URL, first use a Row Filter to remove empty values, then create the next call (e.g. as you have shown in the Java Snippet) and rename the column so that it has the same name as the original call (otherwise the GET Request node will not use it in the next iteration). Then connect it to the lower inport of the Recursive Loop End.

The Recursive Loop End needs to be configured so that it stops after the last request. For this, make sure “Minimal number of rows” is set to 1; in combination with the Row Filter that removes empty values, this ends the loop when there is no more “next” URL. To check that it works and to avoid an endless loop, you can also set a maximal number of iterations. Also make sure you don’t activate the “Collect data from last iteration only” option.
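If it helps to see the logic without the nodes, the same pattern can be sketched in Python. This is only an illustration under assumptions: each response is taken to be a dict with a "records" list and an optional "nextRecordsUrl", and `fetch_page` is a hypothetical stand-in for the GET Request (here it just reads from a fake in-memory table, so nothing is sent to Salesforce).

```python
def fetch_all_records(first_url, fetch_page, max_iterations=100):
    """Follow nextRecordsUrl until it is missing, collecting every page."""
    records = []
    url = first_url
    # max_iterations plays the role of the "maximal number of iterations" safety net.
    for _ in range(max_iterations):
        page = fetch_page(url)
        # Keep the data from every iteration, not only the last one.
        records.extend(page.get("records", []))
        next_url = page.get("nextRecordsUrl")
        if not next_url:
            # No "next" URL left: same stop condition as the empty-row filter.
            break
        url = next_url
    return records


# Fake pages standing in for real responses (made-up data for the demo).
PAGES = {
    "/query?q=demo": {"records": [1, 2], "nextRecordsUrl": "/query/abc-2"},
    "/query/abc-2": {"records": [3, 4], "nextRecordsUrl": "/query/abc-4"},
    "/query/abc-4": {"records": [5], "done": True},
}

all_records = fetch_all_records("/query?q=demo", PAGES.__getitem__)
print(all_records)
```

Passing the fetch step in as a function keeps the loop logic separate from the HTTP call, much like the loop body in the workflow is separate from the loop start and end nodes.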

I hope this helps!

Eloisa: Can you update us? Were you able to get more than 2,000 records? Can you publish a workflow?

Dear all, for the moment I just made each request separately and used a Chunk Loop Start. If I find a better way I will let you know. Many thanks!

many thanks!

Hi all,

Try this… It is a workflow that takes a query and then, inside a metanode, runs a loop that writes the nextRecordsUrl to a temporary file. The metanode returns the complete result of the initial query.

SALESFORCE EXAMPLE.knwf (63.5 KB)

Hello!

Just adding that it is now possible to connect to Salesforce using native nodes. See the topic below for more:

Br,

Ivan