@USCHUKN1ME I tried to build a system you might be able to expand and use.

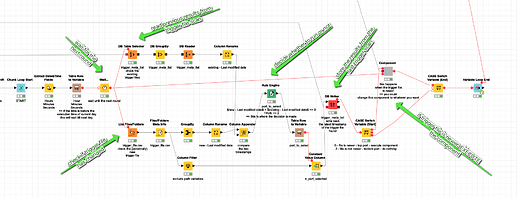

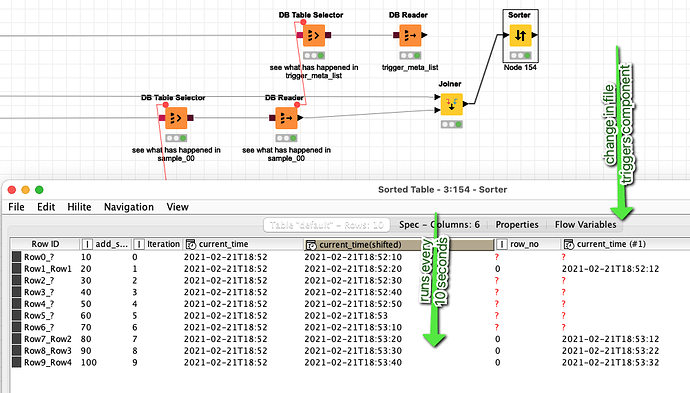

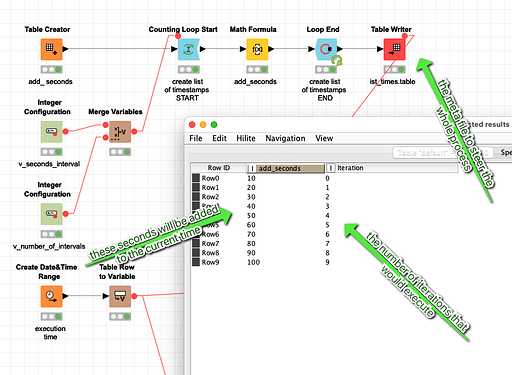

The idea is to have a workflow that iterates a set number of times (v_number_of_intervals) and waits a set number of seconds (v_seconds_interval) between iterations. On each iteration the workflow checks a trigger_file.csv: if the file has changed since the last time the loop ran, a component is executed. If the file has not changed, nothing happens.
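For readers who want to see the polling logic outside KNIME, here is a minimal Python sketch. It assumes that "changed" means the file content differs, compared via a SHA-256 hash; the actual workflow may compare modification dates or other metadata instead. The names `watch_trigger_file`, `number_of_intervals`, `seconds_interval` and `on_change` are hypothetical stand-ins for the flow variables and the component, and the `sleep` parameter exists only so the loop can be tested without really waiting.

```python
import hashlib
import time
from pathlib import Path
from typing import Callable, List, Optional


def file_fingerprint(path: Path) -> Optional[str]:
    """Return a SHA-256 hash of the file's bytes, or None if the file does not exist."""
    try:
        return hashlib.sha256(path.read_bytes()).hexdigest()
    except FileNotFoundError:
        return None


def watch_trigger_file(
    path: Path,
    number_of_intervals: int,          # hypothetical stand-in for v_number_of_intervals
    seconds_interval: float,           # hypothetical stand-in for v_seconds_interval
    on_change: Callable[[int], None],  # stands in for the component to execute
    sleep: Callable[[float], None] = time.sleep,
) -> List[int]:
    """Poll `path` a fixed number of times and call `on_change` when its content changes.

    Returns the 1-based iteration numbers at which `on_change` was called.
    """
    last_seen = file_fingerprint(path)  # baseline taken right before the loop starts
    executed: List[int] = []
    for iteration in range(1, number_of_intervals + 1):
        current = file_fingerprint(path)
        if current != last_seen:
            on_change(iteration)        # file changed since the previous scan
            executed.append(iteration)
            last_seen = current         # remember this state for the next comparison
        if iteration < number_of_intervals:
            sleep(seconds_interval)     # wait only between iterations, not after the last one
    return executed
```

Calling `watch_trigger_file(Path("trigger_file.csv"), 10, 5, on_change=print)` would check the file ten times, five seconds apart, and print each iteration at which a change was seen. Because the baseline is taken right before the loop starts, a change made during a wait is picked up at the next iteration.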

Admittedly this is not the most elegant approach, but you could build a small automatic execution machine with it, as long as your workflow keeps running.

You can monitor the effect: every time the trigger file changes, the component gets executed. In this example there are 10 iterations. The component executes at the second iteration, then nothing happens until iterations 7, 8 and 9, where the file has changed and the component executes again. (The changes are simulated in the m_001 workflow; in a real-life scenario they would be caused by outside factors.)

This might serve as an example of how such a thing could work. Obviously it would need some more work, and one can debate whether this is the best way forward. I would recommend using the KNIME Server instead.

(please download the complete workflow group)

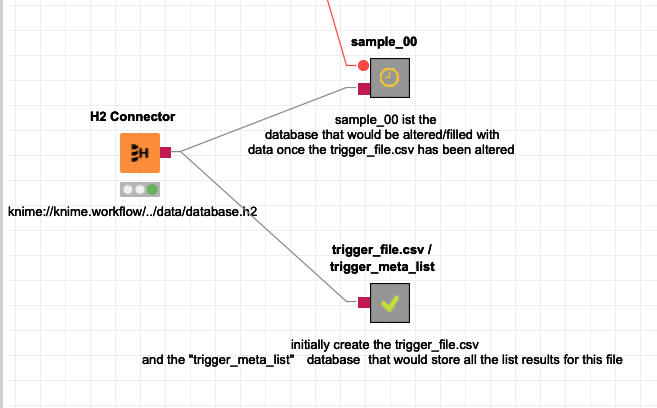

The job m_001 prepares the setup and simulates changes to trigger_file.csv.

The setup uses three elements:

- trigger_file.csv - a simple CSV file that changes over time (for example, a new delivery might be sent to a folder)

- trigger_meta_list - a small database table that stores every scan of trigger_file.csv, so you can see whether something has changed

- sample_00 - a dummy table in the same H2 database, where you would either do something (if the trigger file is newer) or not
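The bookkeeping behind trigger_meta_list and sample_00 could be sketched as follows. This uses Python's built-in `sqlite3` module as a stand-in for the H2 database, and the column names (`scanned_at`, `file_size`, `content_hash`, `triggered_by_scan`) as well as the functions `init_db` and `scan_trigger_file` are hypothetical. Every scan is logged; a row is written to sample_00 only when the hash differs from the most recently logged scan. Treating the very first scan (empty log) as a change is a design assumption of this sketch.

```python
import hashlib
import sqlite3
import time
from pathlib import Path


def init_db(conn: sqlite3.Connection) -> None:
    """Create the two hypothetical tables if they do not exist yet."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS trigger_meta_list ("
        " scan_id INTEGER PRIMARY KEY AUTOINCREMENT,"
        " scanned_at REAL NOT NULL,"
        " file_size INTEGER NOT NULL,"
        " content_hash TEXT NOT NULL)"
    )
    conn.execute(
        "CREATE TABLE IF NOT EXISTS sample_00 ("
        " run_id INTEGER PRIMARY KEY AUTOINCREMENT,"
        " triggered_by_scan INTEGER NOT NULL)"
    )


def scan_trigger_file(conn: sqlite3.Connection, path: Path) -> bool:
    """Log one scan of `path`; if its content changed, record a run in sample_00.

    Returns True when the content differs from the previous logged scan.
    """
    data = path.read_bytes()
    content_hash = hashlib.sha256(data).hexdigest()
    # Most recent previous scan, if any.
    row = conn.execute(
        "SELECT content_hash FROM trigger_meta_list ORDER BY scan_id DESC LIMIT 1"
    ).fetchone()
    changed = row is None or row[0] != content_hash  # empty log counts as a change
    cur = conn.execute(
        "INSERT INTO trigger_meta_list (scanned_at, file_size, content_hash) VALUES (?, ?, ?)",
        (time.time(), len(data), content_hash),
    )
    if changed:
        # Stand-in for "do something": note which scan triggered the run.
        conn.execute("INSERT INTO sample_00 (triggered_by_scan) VALUES (?)", (cur.lastrowid,))
    conn.commit()
    return changed
```

Keeping the full scan history (instead of only the last hash) mirrors the idea of trigger_meta_list: you can later inspect when each change was detected.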